In an earlier blog post, Dapr - Agent Integrations & .NET Aspire, I talked about how Agent Integrations augment and enhance other agentic frameworks (e.g., CrewAI, LangGraph, Strands Agents, Microsoft Agent Framework, Google ADK, OpenAI Agents, Pydantic AI, and Deep Agents) by providing them with the key capabilities that production systems demand.

In this blog post, I will take things a step further and focus on Dapr Agents, Dapr's own native agent framework. If Agent Integrations are about bringing production-readiness to your existing framework, Dapr Agents is about building with production-readiness from the very beginning. It is a Python framework that sits directly on top of Dapr's building blocks:

- Dapr Workflows for durable, long-running execution.
- The State Management API for portable agent memory.
- Pub/Sub and Service Invocation for reliable, secure agent-to-agent communication.
- Secure agent identity as a default rather than a configuration option.
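Durable execution is the key property here. Dapr Workflows records the result of each completed activity. After a crash or restart, it replays the workflow code against that history, so finished steps do not run again. The stdlib-only sketch below models this idea. `DurableContext` and its `call_activity` method are hypothetical names and are not the Dapr Workflow SDK API.

```python
from __future__ import annotations

from typing import Any, Callable


class DurableContext:
    """Hypothetical replay context for illustration; NOT the Dapr Workflow SDK API."""

    def __init__(self, history: list[Any]) -> None:
        self.history = history  # persisted results of completed activities, in call order
        self.position = 0  # index of the next activity call in this execution
        self.executed: list[str] = []  # activities that actually ran during this execution

    def call_activity(self, fn: Callable[[Any], Any], arg: Any) -> Any:
        if self.position < len(self.history):
            # Replay: this activity already completed, so reuse its recorded result.
            result = self.history[self.position]
        else:
            # New work: run the activity and checkpoint its result.
            result = fn(arg)
            self.history.append(result)
            self.executed.append(fn.__name__)
        self.position += 1
        return result


def normalize(task: str) -> str:
    return task.strip().lower()


def plan(profile: str) -> str:
    return f"plan for {profile}"


def workflow(ctx: DurableContext, task: str) -> str:
    # Workflow code must be deterministic so every replay issues the same calls in the same order.
    profile = ctx.call_activity(normalize, task)
    return ctx.call_activity(plan, profile)


# First run: the process "crashes" right after the first activity completes.
history: list[Any] = []
DurableContext(history).call_activity(normalize, "  Pallets to Munich ")

# After a restart, the workflow is replayed against the same history.
resumed = DurableContext(history)
result = workflow(resumed, "  Pallets to Munich ")
print(result)            # plan for pallets to munich
print(resumed.executed)  # ['plan']
```

Only `plan` runs after the restart, because the result of `normalize` is read back from history. This is also why Dapr workflow code must stay deterministic and keep side effects inside activities.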

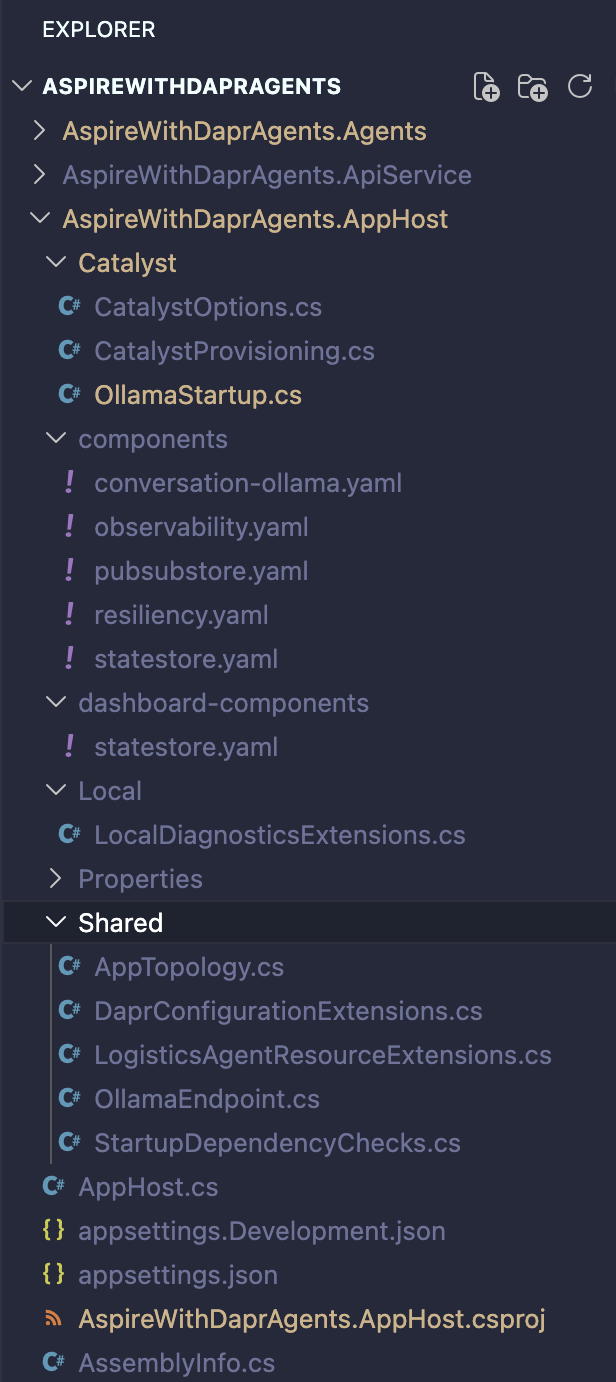

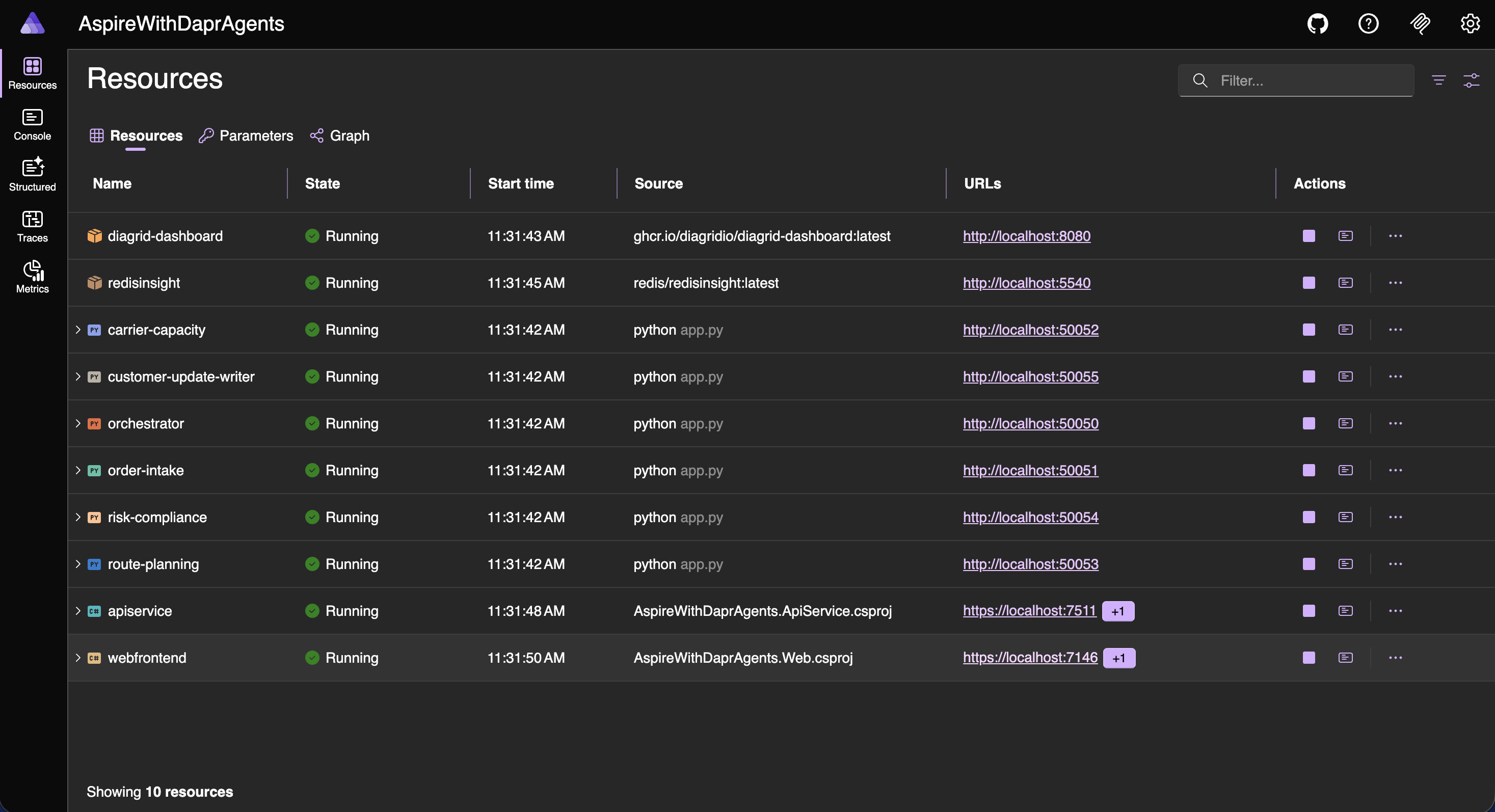

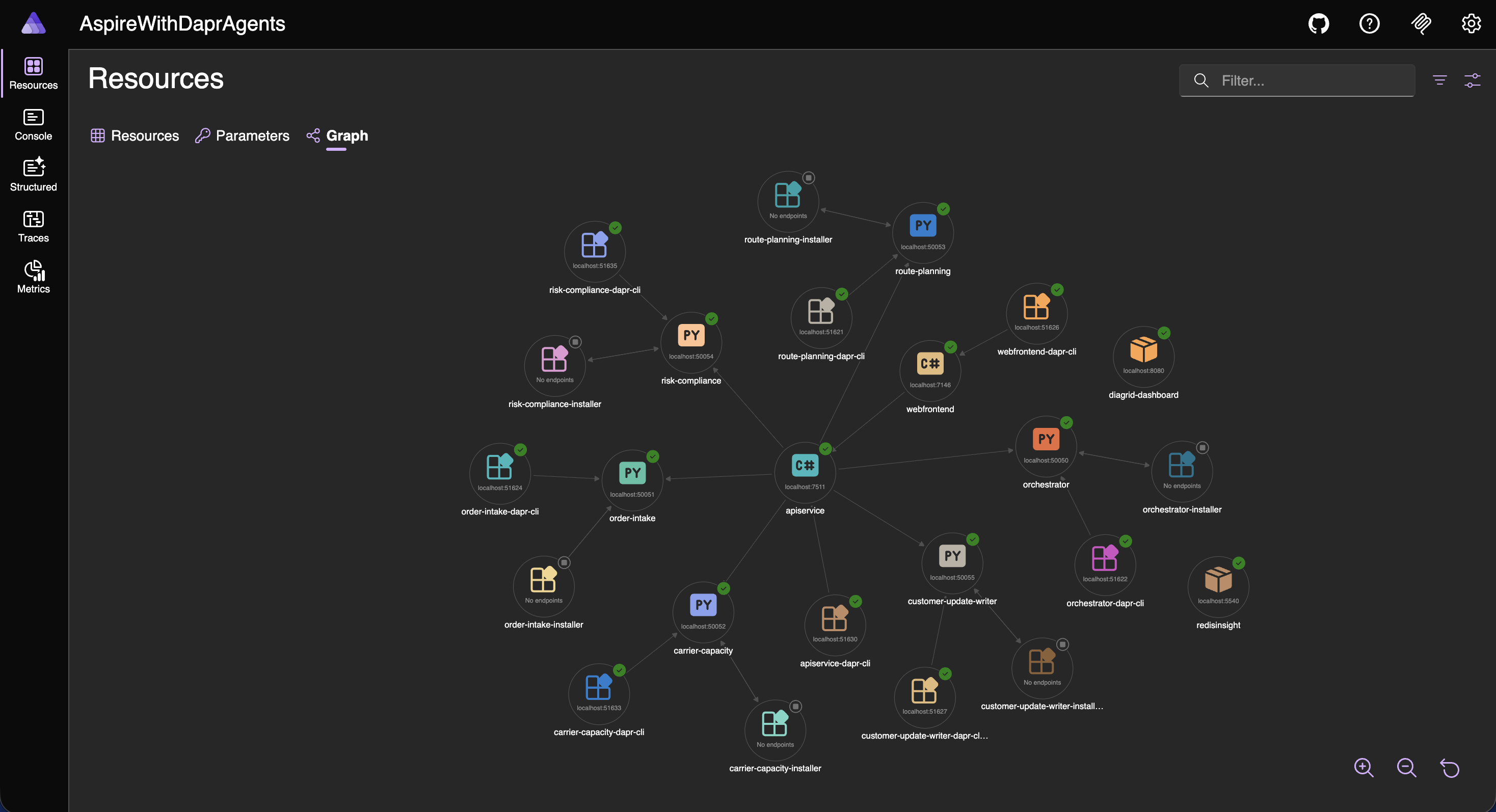

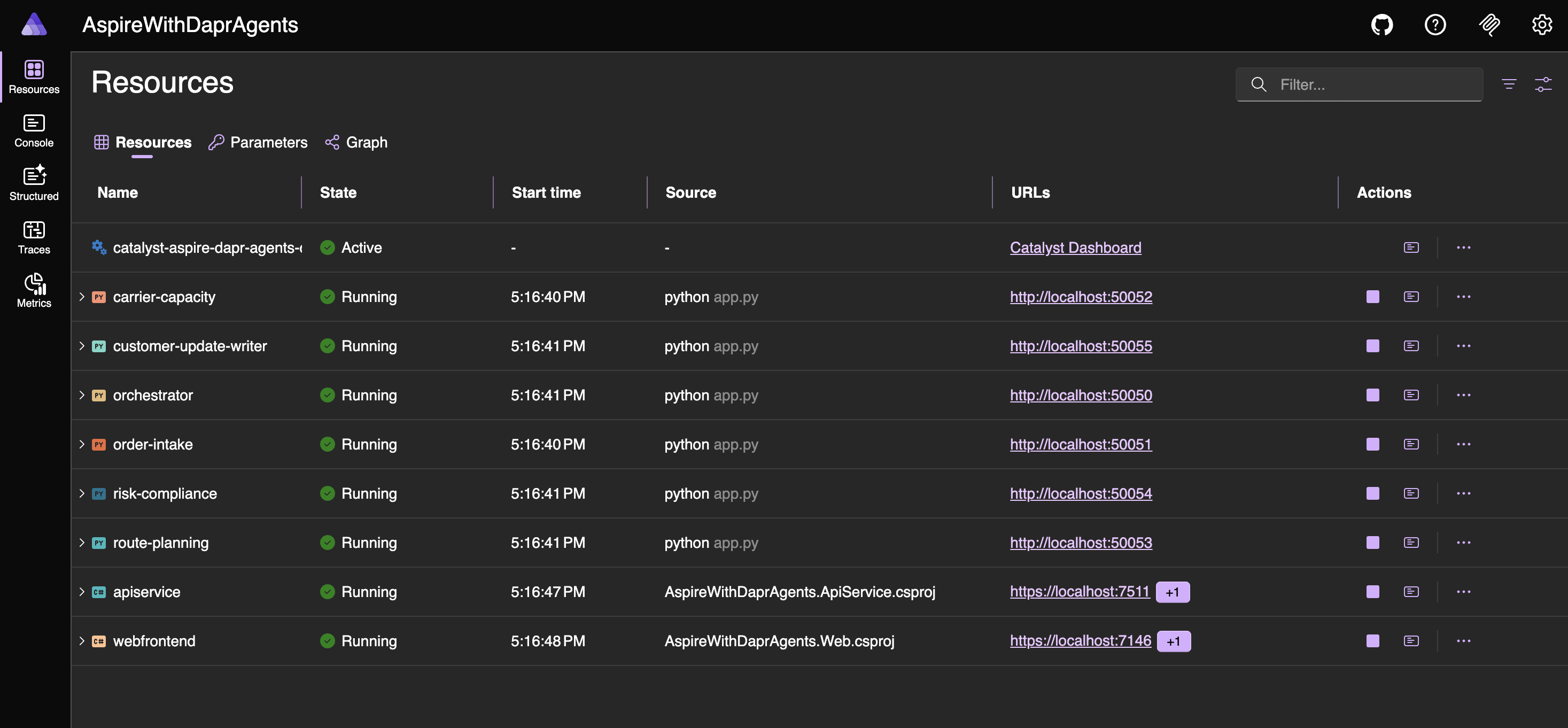

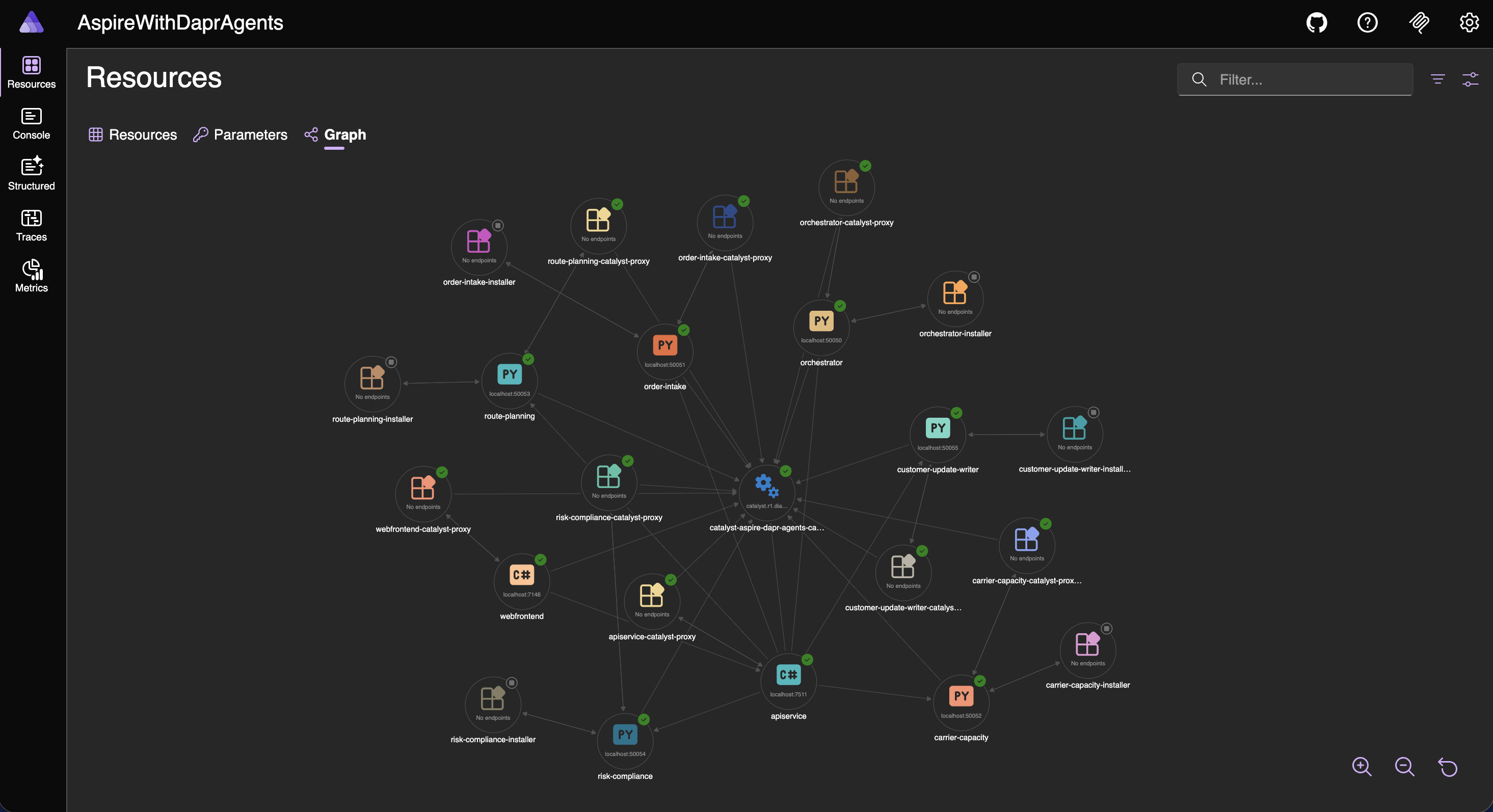

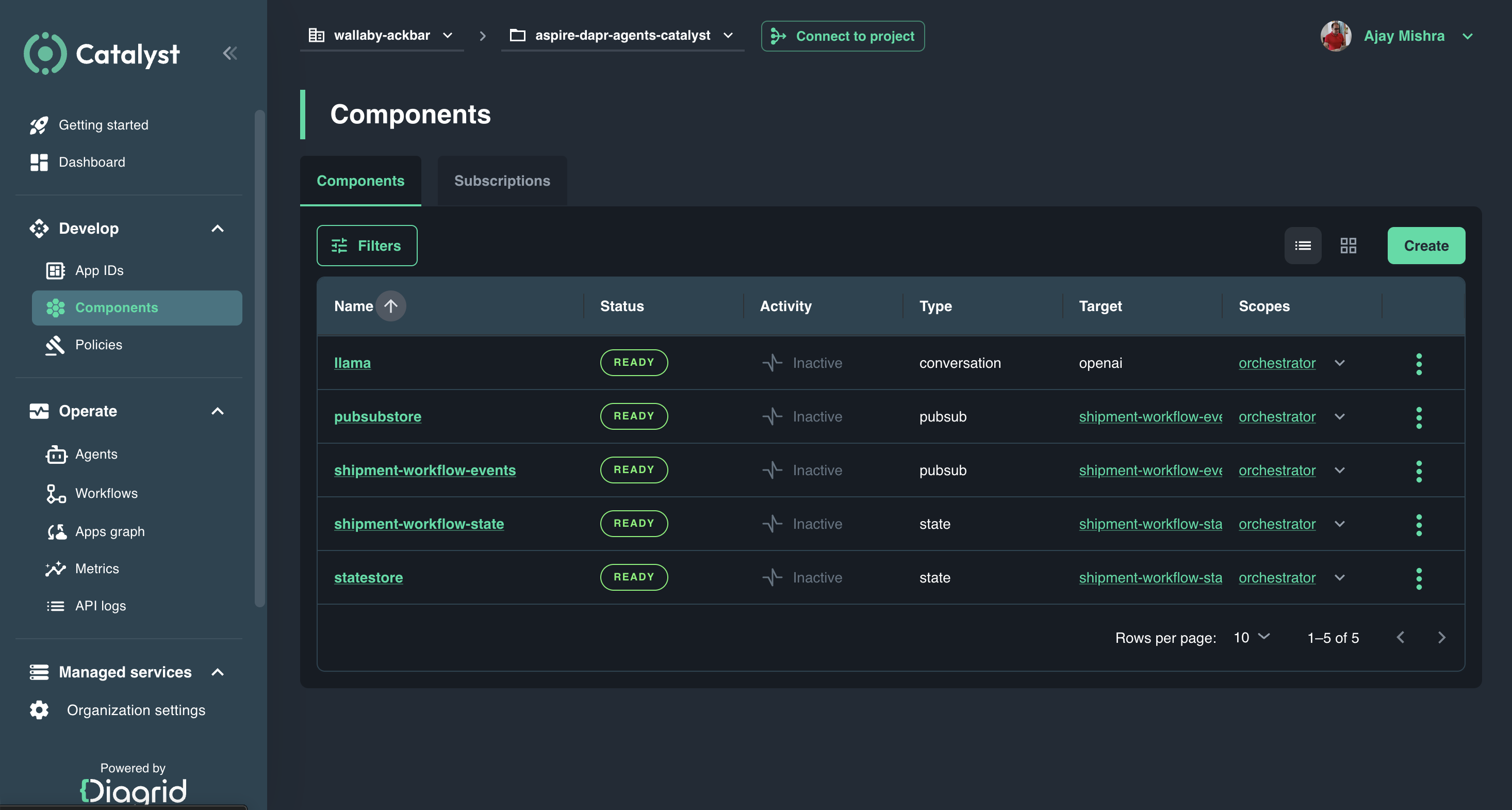

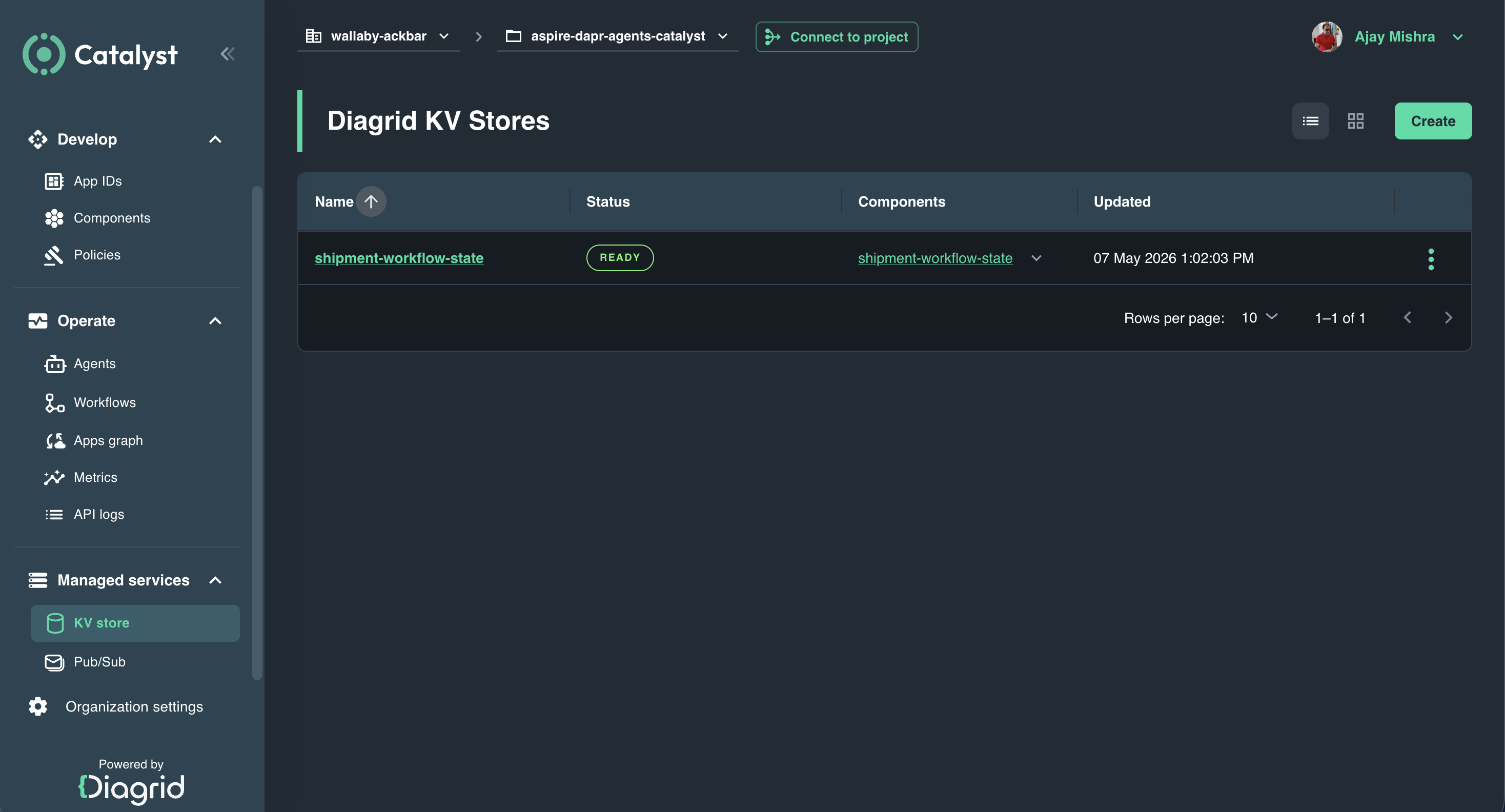

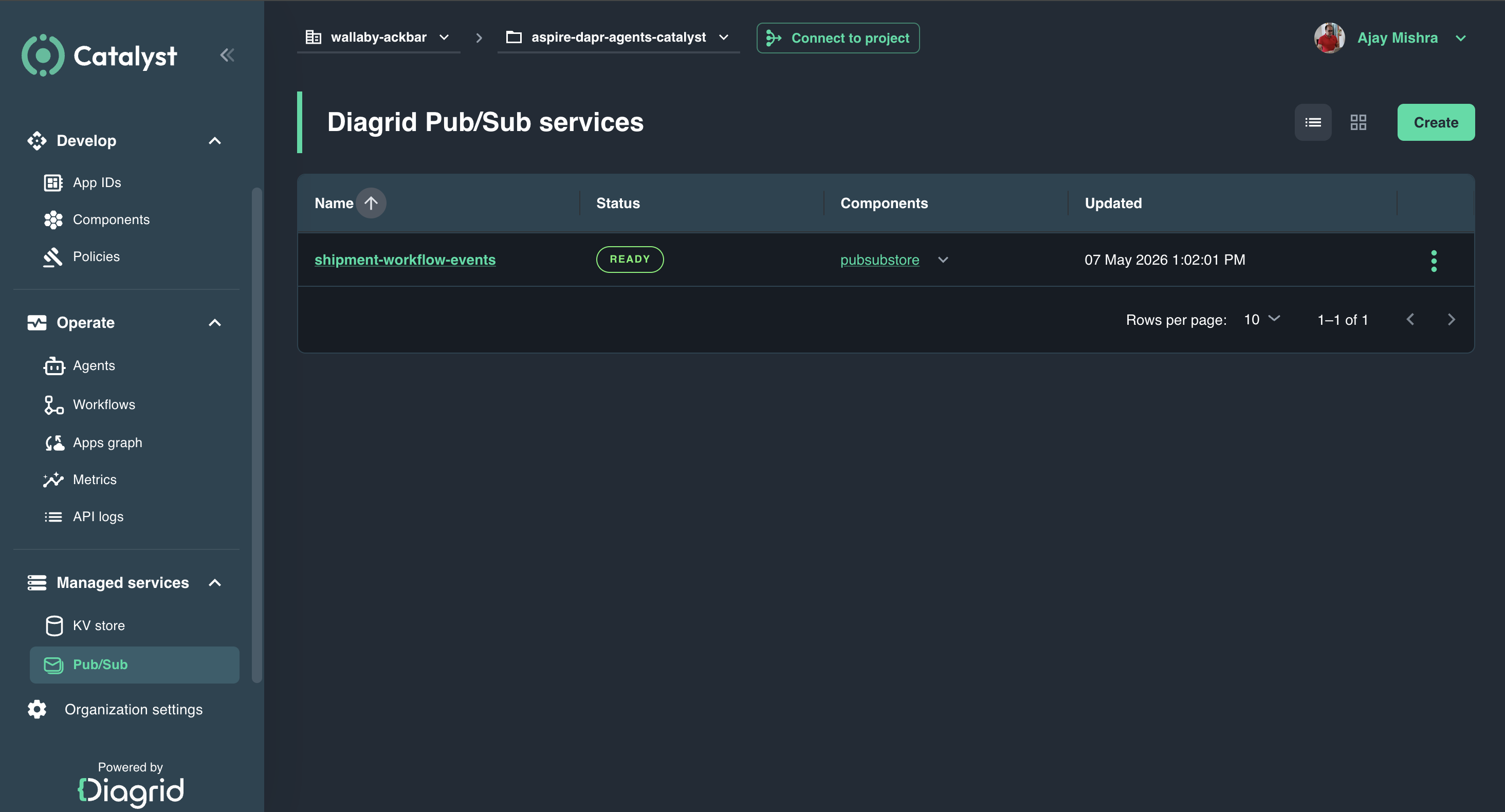

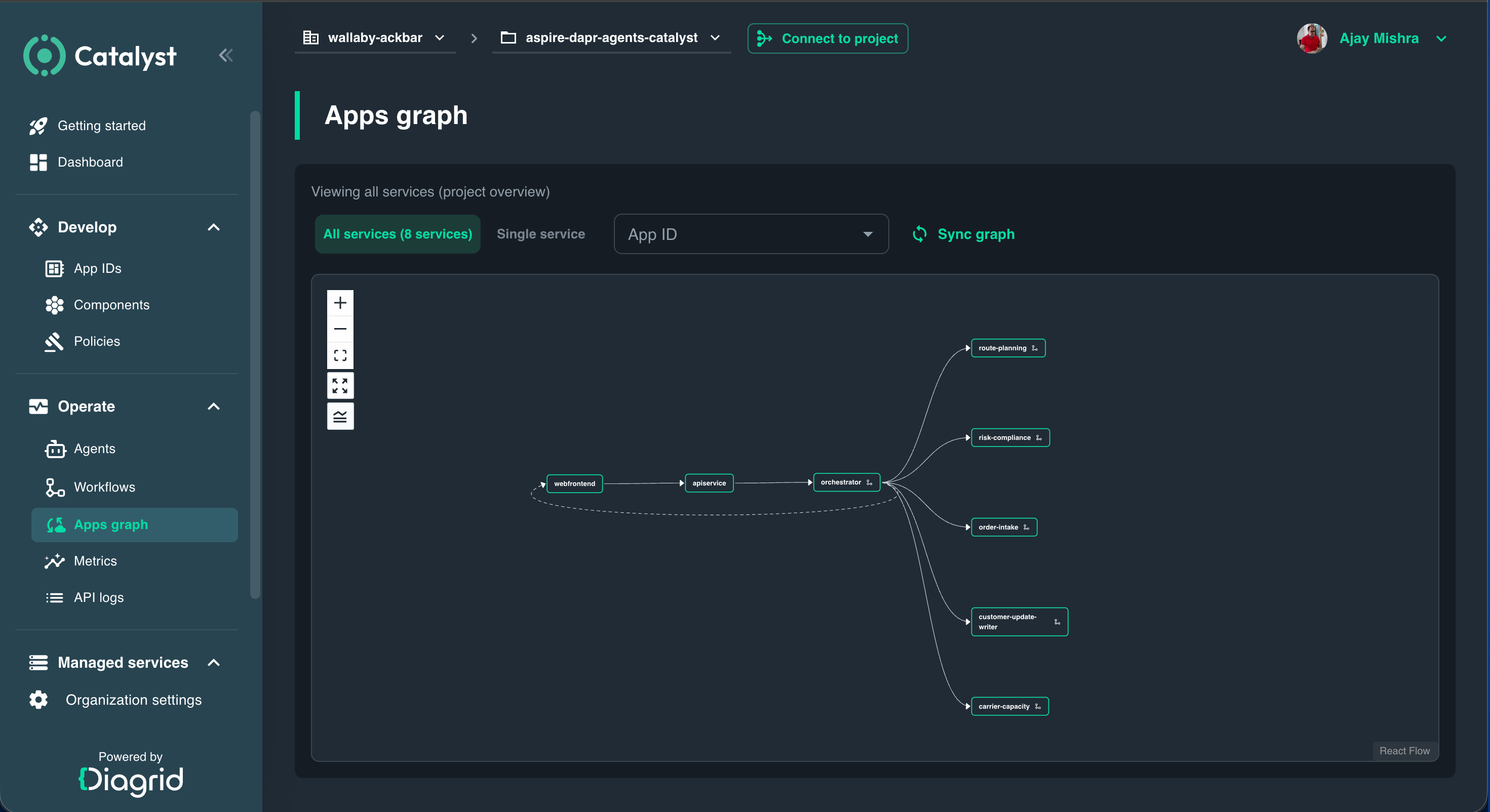

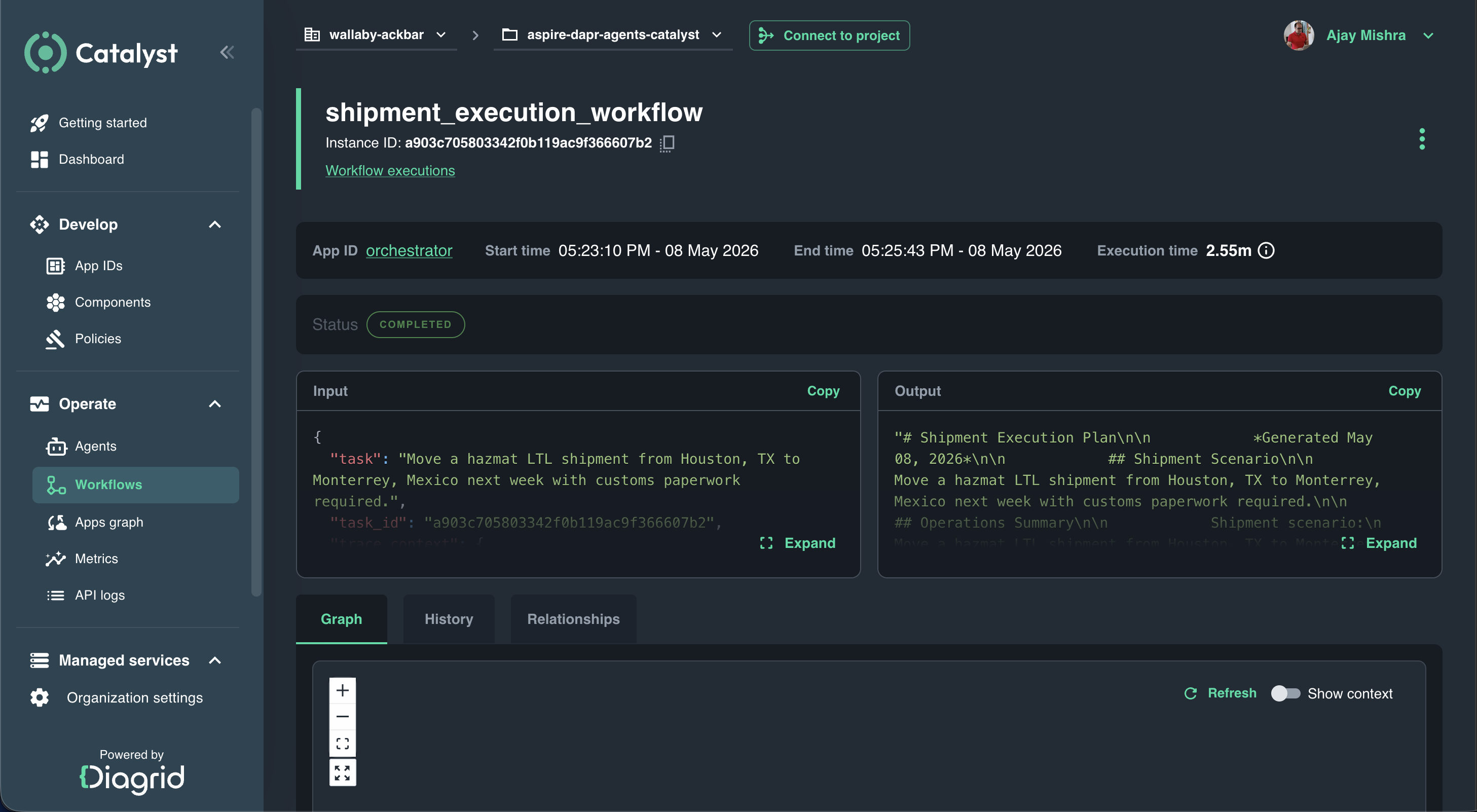

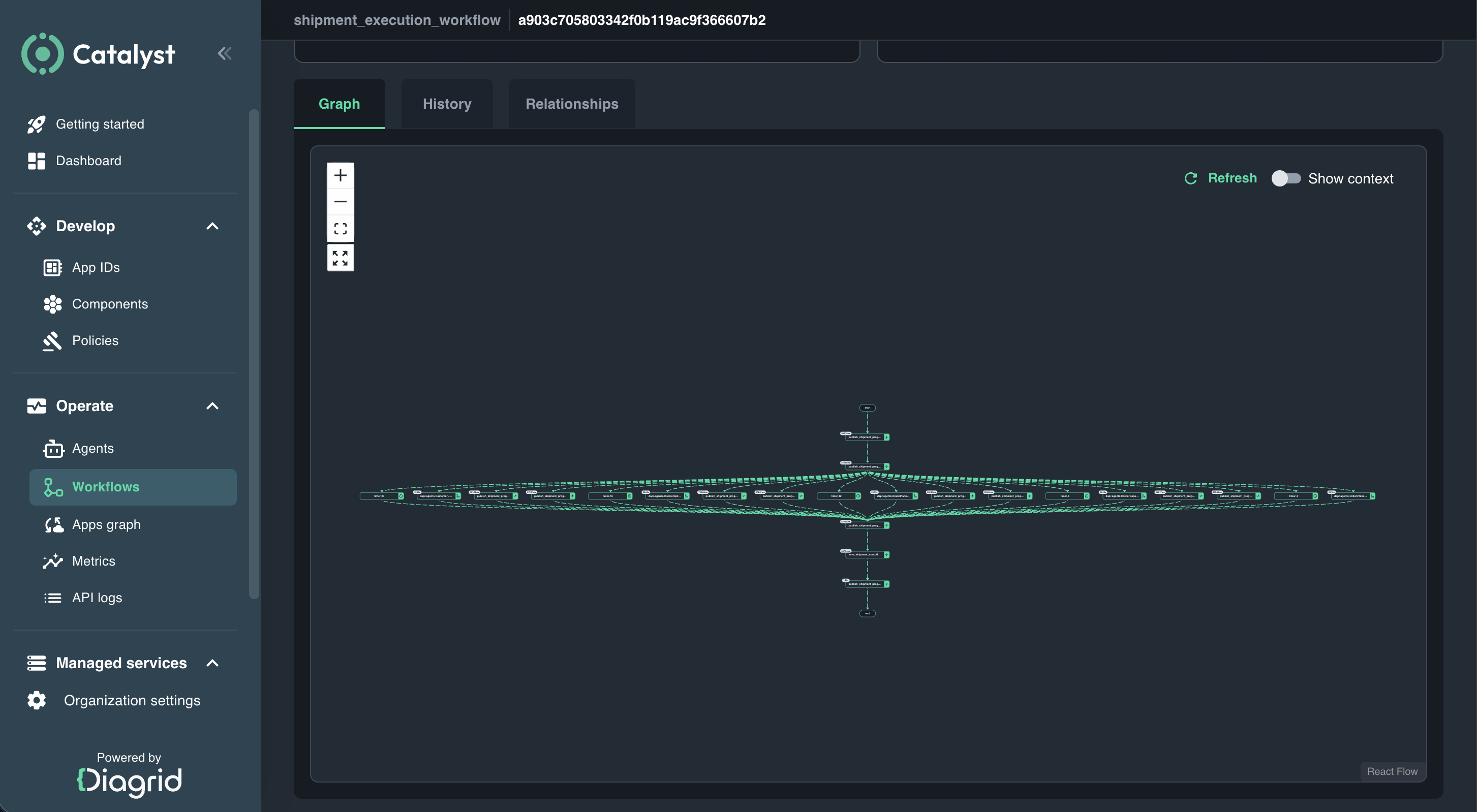

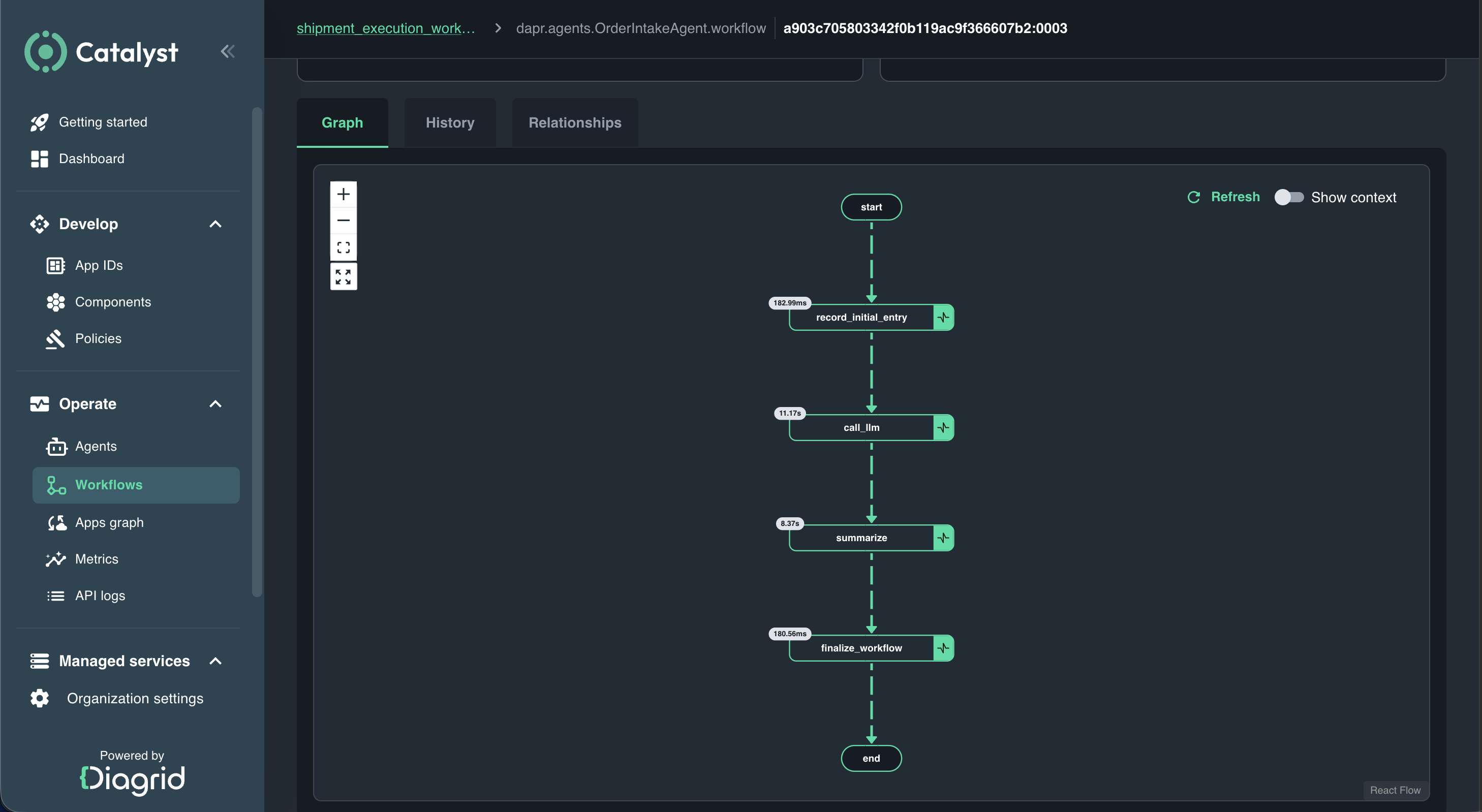

Aspire (formerly .NET Aspire) and Diagrid Catalyst play important supporting roles in this story.

Aspire is a multi-language orchestration toolchain for local development. It lets you define your entire stack in code, including frontends, APIs, containers, databases, and Dapr sidecars. It also provides built-in OpenTelemetry and delivers logs, traces, and health checks automatically. Together, these make it significantly easier to move from a local prototype to a resilient distributed application.

Catalyst, on the other hand, is a centralized platform for running and operating the Dapr runtime as a service in production. It handles operational automation, scaling, zero-trust security, and governance, and provides full visibility into every agent execution and workflow trace.

Prerequisites

- .NET 10

- Python 3.14

- uv 0.11

- Dapr Agents 1.0

- Dapr Runtime 1.17.5

- Dapr CLI 1.16.5

- Docker Desktop

- Ollama & llama3.2

- ngrok 3.39.1

- Aspire 13.3.0

- Diagrid Catalyst Account

Demo App

The demo app is based on the Aspire starter template, which includes a frontend (ASP.NET Core Blazor App), a backend (ASP.NET Core Minimal API), Service Defaults, and an AppHost project to demonstrate Aspire's capabilities.

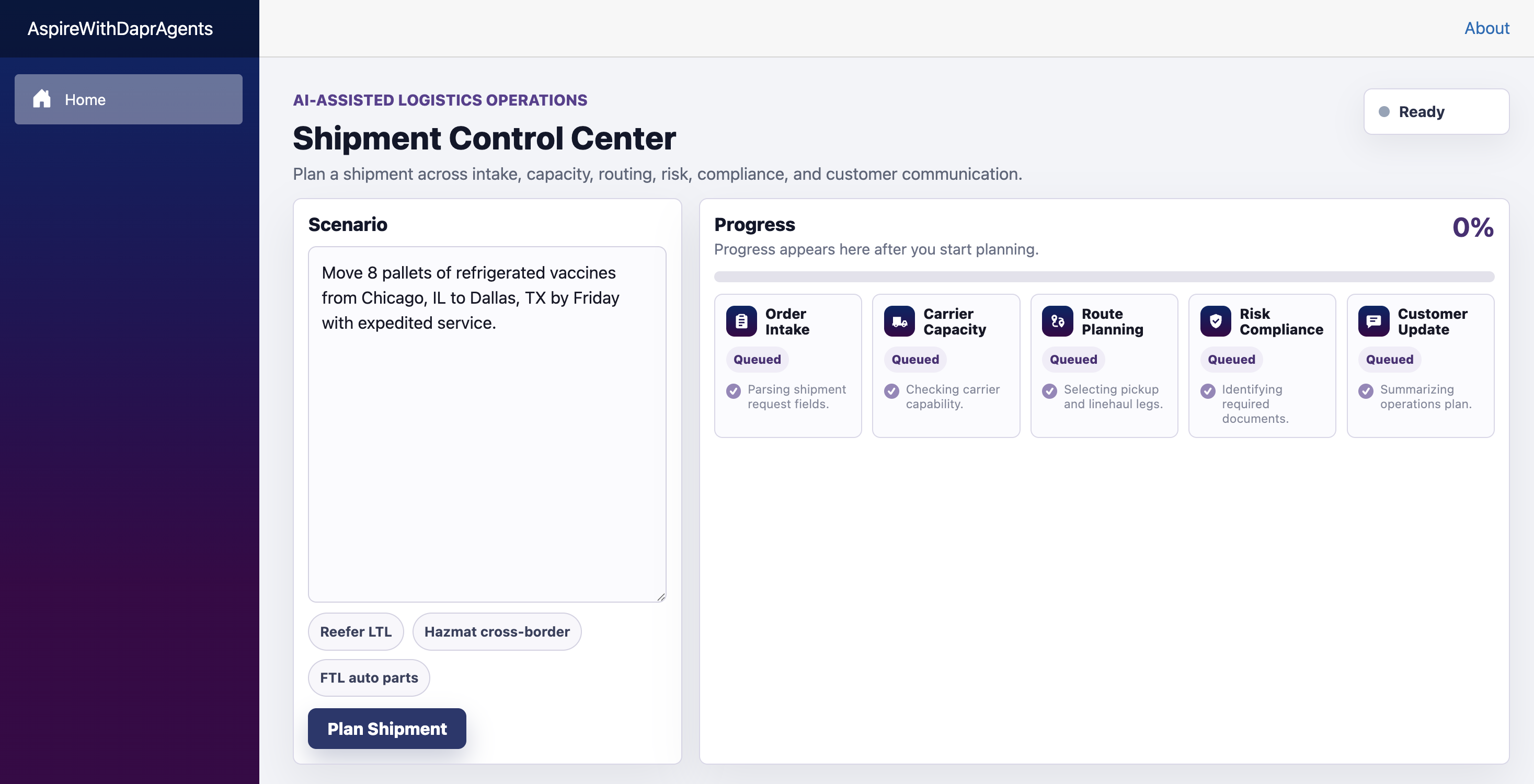

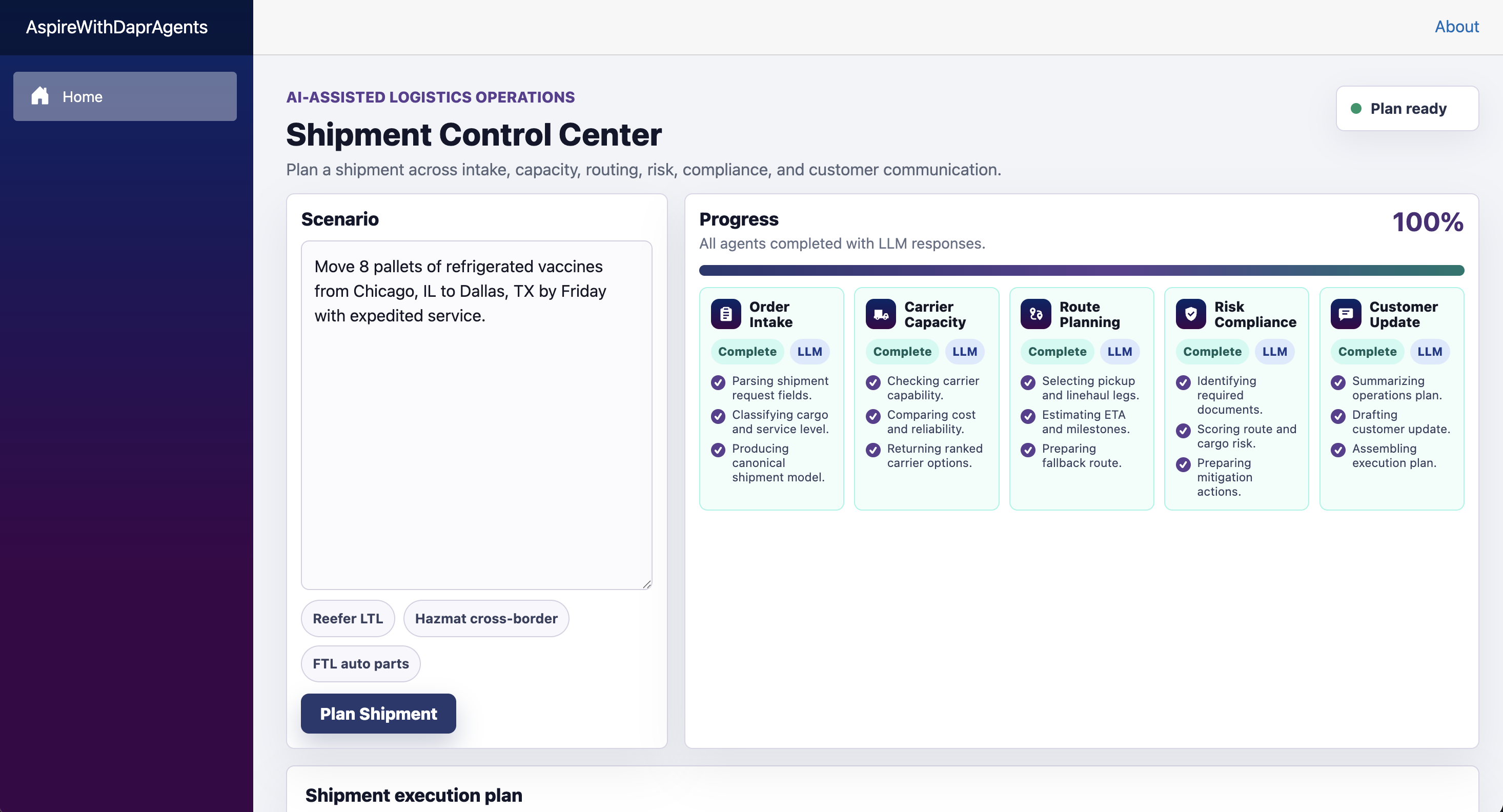

The app acts as a Shipment Control Center for AI-assisted logistics operations. It accepts a shipment scenario from a Blazor web frontend and sends it through an API gateway to a Dapr Workflow orchestrator. The orchestrator coordinates five Python-based Dapr Agents to generate a complete shipment execution plan, with real-time progress updates.
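The orchestrator's HTTP contract (see `app.py` below) is asynchronous. `POST /agent/run` returns an `instance_id` and a `status_url`. `GET /agent/instances/{instance_id}` then reports the workflow's `runtime_status`.

A minimal Python sketch of a caller polling that endpoint is shown below. The terminal status names `COMPLETED`, `FAILED`, and `TERMINATED` are an assumption based on the Dapr workflow status enum names the endpoint exposes. `wait_for_workflow` and its injected `fetch_status` and `sleep` functions are hypothetical, chosen so the loop runs without a server.

```python
from __future__ import annotations

import time
from typing import Any, Callable

# Assumed terminal values of "runtime_status" as returned by GET /agent/instances/{id}.
TERMINAL_STATUSES = {"COMPLETED", "FAILED", "TERMINATED"}


def wait_for_workflow(
    fetch_status: Callable[[str], dict[str, Any]],
    status_url: str,
    poll_interval: float = 1.0,
    timeout: float = 300.0,
    sleep: Callable[[float], None] = time.sleep,
) -> dict[str, Any]:
    """Poll status_url until the workflow reaches a terminal state or the timeout elapses."""
    waited = 0.0
    while True:
        payload = fetch_status(status_url)
        if payload.get("runtime_status") in TERMINAL_STATUSES:
            return payload
        if waited >= timeout:
            raise TimeoutError(f"{status_url} did not finish within {timeout} seconds")
        sleep(poll_interval)
        waited += poll_interval  # counts sleep time only, which is enough for a sketch


# Usage with a fake fetcher standing in for HTTP GETs against the orchestrator.
responses = iter([
    {"runtime_status": "PENDING"},
    {"runtime_status": "RUNNING"},
    {"runtime_status": "COMPLETED"},
])
final = wait_for_workflow(lambda url: next(responses), "/agent/instances/demo-1", sleep=lambda seconds: None)
print(final["runtime_status"])  # COMPLETED
```

In a real client, `fetch_status` would issue an HTTP GET against the orchestrator and parse the JSON body. The progress events published over Pub/Sub can complement polling for live updates.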

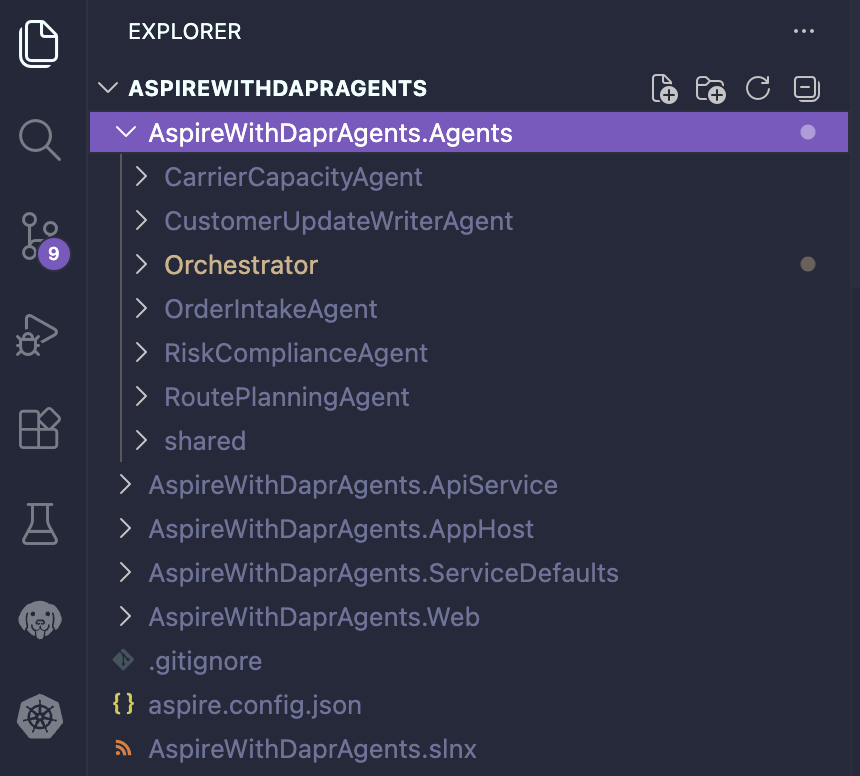

The Order Intake Agent normalizes the raw shipment request into a canonical shipment profile. The Carrier Capacity Agent evaluates feasible carriers, capacity, equipment, and booking options. The Route Planning Agent creates route legs, milestones, alternative paths, and ETA details. The Risk Compliance Agent validates documents, restrictions, operational risks, and mitigation strategies. Finally, the Customer Update Writer Agent converts the planning brief into an operations-ready execution plan along with a customer-facing update.
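The five agents form a sequential pipeline. Each stage receives the original scenario plus every output produced before it, and progress is reported after each stage. The stdlib-only sketch below shows that data flow with these assumptions:

- `run_agent` stands in for starting a child workflow.
- The even 20% progress steps are illustrative.
- The output keys mirror field names saved by `state_store.py`, such as `shipment_request` and `carrier_options`.

```python
from __future__ import annotations

from typing import Callable

# Stage order as described above; output keys are assumptions borrowed from state_store.py.
STAGES = [
    ("order-intake", "shipment_request"),
    ("carrier-capacity", "carrier_options"),
    ("route-planning", "route_plan"),
    ("risk-compliance", "risk_compliance"),
    ("customer-update-writer", "execution_plan"),
]


def run_pipeline(
    scenario: str,
    run_agent: Callable[[str, str], str],
    report: Callable[[str, int], None],
) -> dict[str, str]:
    outputs: dict[str, str] = {"shipment_scenario": scenario}
    for index, (agent, key) in enumerate(STAGES, start=1):
        # Each agent sees the scenario plus every earlier output as labeled sections.
        context = "\n\n".join(f"{name}:\n{value}" for name, value in outputs.items())
        outputs[key] = run_agent(agent, context)
        report(agent, index * 100 // len(STAGES))
    return outputs


seen: list[tuple[str, int]] = []
events: list[tuple[str, int]] = []


def fake_agent(agent: str, context: str) -> str:
    # Stand-in for a child workflow: record how many context sections it received.
    seen.append((agent, context.count("\n\n") + 1))
    return f"{agent} output"


outputs = run_pipeline(
    "20 pallets from Rotterdam to Munich",
    run_agent=fake_agent,
    report=lambda agent, percent: events.append((agent, percent)),
)
print([sections for _, sections in seen])  # [1, 2, 3, 4, 5]
print(events[-1])                          # ('customer-update-writer', 100)
```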

The image below depicts the application's Shipment Control Center UI.

NOTE: For a .NET stack, the orchestrator could be written in .NET while all agent code stays in Python, since Dapr Agents currently supports only Python. Alternatively, all agents could be rewritten using the Microsoft Agent Framework, with Dapr's Agent Integrations making them production-ready. This may be demonstrated in an upcoming blog post.

Dapr-ization of the App

Refer to the Dapr-ization of the App section in Diagrid Catalyst & .NET Aspire to configure the app to use the Dapr sidecar.

Start with Workflow & Agents

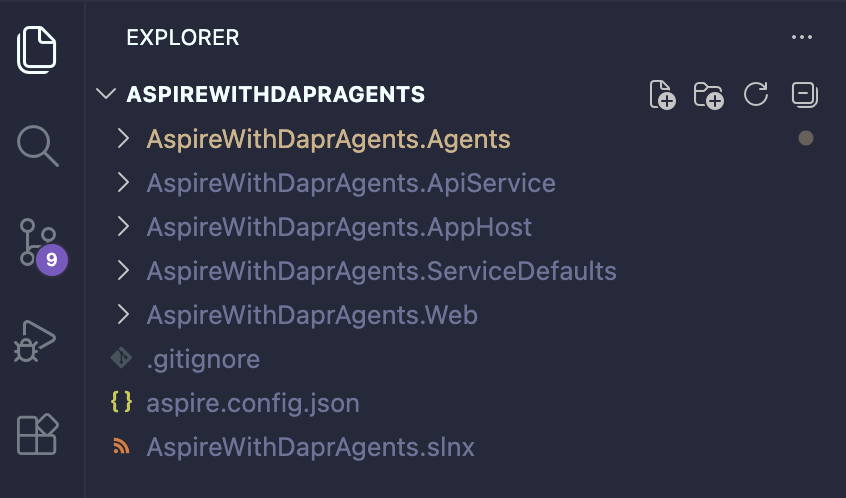

Since the application's basic structure is ready, let’s start creating the Agents folders and subfolders as shown below.

Orchestrator - Workflow

The orchestrator is responsible for managing the complete cross-agent business workflow. It starts each child workflow using the appropriate Dapr app ID and workflow name, waits for each workflow to complete, and reads the resulting output. It also validates whether the output is usable and handles retries or fallback logic when an agent times out, fails, or returns unusable final content. In addition, the orchestrator builds the input for the next agent based on the outputs of previous agents, publishes progress events throughout the execution lifecycle, and ultimately returns the finalized execution plan to the API. The orchestrator is not merely a routing component; it owns and manages the end-to-end cross-agent business process.
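The retry-and-fallback responsibility can be sketched in plain Python. This is not the orchestrator's actual workflow code, which runs child workflows through Dapr. `call_with_fallback`, `call_agent`, `fallback_content`, and the simplified `classify` are hypothetical. The result type copies the fields of the `AgentCallResult` dataclass in `contracts.py`, and the reason codes reuse names from `workflow_helpers.py`. Each attempt's output is validated, and only when every attempt fails does the stage fall back, recording why.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class AgentCallResult:
    # Same fields as the AgentCallResult dataclass in contracts.py.
    content: str
    source: str
    attempts: int
    fallback_reason: str = ""
    fallback_detail: str = ""


def classify(output: str | None) -> str:
    """Return "" for usable output, else a reason code (simplified subset of workflow_helpers.py)."""
    if output is None or output.strip().lower() in {"", "none", "null"}:
        return "empty_output"
    if '"name"' in output and '"parameters"' in output:
        return "llm_tool_call_final"
    if output.strip().lower().startswith("error"):
        return "tool_error"
    return ""


def call_with_fallback(
    call_agent: Callable[[int], str | None],
    fallback_content: str,
    max_attempts: int = 2,
) -> AgentCallResult:
    reason = "retry_exhausted"
    for attempt in range(1, max_attempts + 1):
        try:
            output = call_agent(attempt)
        except TimeoutError:
            reason = "timeout"  # the child workflow did not finish in time
            continue
        reason = classify(output)
        if not reason:
            return AgentCallResult(content=output, source="agent", attempts=attempt)
    # Every attempt failed: return the fallback payload and record why.
    return AgentCallResult(
        content=fallback_content,
        source="fallback",
        attempts=max_attempts,
        fallback_reason=reason,
        fallback_detail=f"no usable output after {max_attempts} attempt(s)",
    )


replies = iter(['{"name": "lookup_carriers", "parameters": {}}', "Carrier A: 2 reefer trucks, pickup Monday"])
ok = call_with_fallback(lambda attempt: next(replies), fallback_content="Manual carrier review required.")
print(ok.source, ok.attempts)  # agent 2


def always_times_out(attempt: int) -> str:
    raise TimeoutError("child workflow timed out")


failed = call_with_fallback(always_times_out, fallback_content="Manual carrier review required.")
print(failed.source, failed.fallback_reason)  # fallback timeout
```

The first reply is a tool call returned as a final answer, so it is rejected and retried. Recording `fallback_reason` lets the progress events and saved state explain why a stage did not use agent output.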

pyproject.toml

```toml
[project]
name = "agent-orchestrator"
version = "0.1.0"
requires-python = ">=3.11,<3.14"
dependencies = [
    "dapr-agents==1.0.0",
    "dapr==1.17.3",
    "opentelemetry-api==1.41.1",
    "opentelemetry-sdk==1.41.1",
    "opentelemetry-exporter-otlp-proto-grpc==1.41.1",
    "python-dotenv==1.0.0",
    "uvicorn[standard]==0.31.1",
]
```

app.py

```python
from __future__ import annotations

import asyncio
import warnings
from datetime import datetime, timezone
from typing import Any

import dapr.ext.workflow as wf
from dapr.clients import DaprClient
from fastapi import Body, FastAPI, HTTPException

from config import READINESS_APP_IDS, logger
from shared.observability import current_trace_context, instrument_fastapi
from readiness import _check_agent_readiness, _check_chat_component_readiness, _check_workflow_readiness, _readiness_entry
from workflow_helpers import log_stage


def _get_task_from_body(body: dict[str, Any] | None) -> str:
    # The shipment scenario text is mandatory.
    task = body.get("task") if isinstance(body, dict) else None
    if not isinstance(task, str) or not task.strip():
        raise HTTPException(status_code=422, detail="A non-empty 'task' field is required.")
    return task.strip()


def _get_requested_instance_id(body: dict[str, Any] | None) -> str | None:
    # An optional caller-supplied task_id is used as the workflow instance ID.
    task_id = body.get("task_id") if isinstance(body, dict) else None
    if not isinstance(task_id, str) or not task_id.strip():
        return None
    return task_id.strip()


def _get_trace_context_from_body(body: dict[str, Any] | None) -> dict[str, str]:
    # Trace context forwarded by the caller so the workflow can join the same trace.
    trace_context = body.get("trace_context") if isinstance(body, dict) else None
    if not isinstance(trace_context, dict):
        return {}
    return {str(key): str(value) for key, value in trace_context.items() if value}


def _workflow_status_url(instance_id: str) -> str:
    return f"/agent/instances/{instance_id}"


def create_app() -> FastAPI:
    app = FastAPI(title="Shipment Execution Workflow Service", version="1.0.0")
    instrument_fastapi(app)
    workflow_client = wf.DaprWorkflowClient()

    @app.get("/health")
    async def health() -> dict[str, str]:
        return {"status": "ok"}

    @app.get("/agent/readiness")
    async def readiness() -> dict[str, Any]:
        # Probe the agent apps concurrently, then the workflow engine and the chat (LLM) component.
        checks = [_readiness_entry("orchestrator", True, "ok")]
        results = await asyncio.gather(
            *[
                asyncio.to_thread(_check_agent_readiness, app_id)
                for app_id in READINESS_APP_IDS
                if app_id != "orchestrator"
            ]
        )
        checks.extend(results)
        checks.append(await asyncio.to_thread(_check_workflow_readiness, workflow_client))
        checks.append(await asyncio.to_thread(_check_chat_component_readiness))
        return {
            "ready": all(check["ready"] for check in checks),
            "checkedAt": datetime.now(timezone.utc).isoformat().replace("+00:00", "Z"),
            "dependencies": checks,
        }

    @app.post("/agent/run")
    async def start_workflow(body: dict[str, Any] = Body(default_factory=dict)) -> dict[str, str]:
        task = _get_task_from_body(body)
        requested_instance_id = _get_requested_instance_id(body)
        trace_context = _get_trace_context_from_body(body) or current_trace_context()
        log_stage(
            "run request received",
            task_id=requested_instance_id or "auto",
            body_keys=sorted(body.keys()) if isinstance(body, dict) else [],
        )

        # Idempotent start: if this task_id already has an instance, return it instead of starting another.
        if requested_instance_id:
            existing_state = await asyncio.to_thread(
                workflow_client.get_workflow_state,
                requested_instance_id,
                fetch_payloads=False,
            )
            if existing_state is not None:
                logger.info(
                    "Workflow instance %s already exists; returning existing status URL.",
                    requested_instance_id,
                )
                return {
                    "instance_id": requested_instance_id,
                    "status_url": _workflow_status_url(requested_instance_id),
                }

        workflow_input = {"task": task, "task_id": requested_instance_id, "trace_context": trace_context}

        def start_via_dapr_runtime() -> str:
            with warnings.catch_warnings():
                # Suppress UserWarnings emitted by the Dapr client during this call.
                warnings.simplefilter("ignore", UserWarning)
                # Use the client as a context manager so its channel is closed afterwards.
                with DaprClient() as dapr_client:
                    response = dapr_client.start_workflow(
                        workflow_component="dapr",
                        workflow_name="shipment_execution_workflow",
                        input=workflow_input,
                        instance_id=requested_instance_id,
                    )
            return response.instance_id

        instance_id = await asyncio.to_thread(start_via_dapr_runtime)
        return {
            "instance_id": instance_id or "",
            "status_url": _workflow_status_url(instance_id or ""),
        }

    @app.get("/agent/instances/{instance_id}")
    async def get_status(instance_id: str) -> dict[str, Any]:
        workflow_state = await asyncio.to_thread(
            workflow_client.get_workflow_state,
            instance_id,
            fetch_payloads=True,
        )
        if workflow_state is None:
            raise HTTPException(status_code=404, detail="Workflow instance not found.")
        payload = workflow_state.to_json()
        # Expose the status enum name and ISO-8601 timestamps so the payload is JSON-friendly.
        payload["runtime_status"] = getattr(
            workflow_state.runtime_status,
            "name",
            str(workflow_state.runtime_status),
        )
        for field in ("created_at", "last_updated_at"):
            ts = payload.get(field)
            if ts:
                payload[field] = ts.isoformat()
        return payload

    return app
```

config.py

```python
from __future__ import annotations

import copy
import logging
import os
import sys
import warnings
from pathlib import Path

# Make the parent folder (which contains the shared package) importable.
sys.path.append(str(Path(__file__).resolve().parents[1]))

from dapr.conf import settings as dapr_settings
from dapr_agents.tool.workflow import agent_workflow_id
from dotenv import load_dotenv
from shared.observability import configure_observability
import uvicorn

load_dotenv()


def normalize_dapr_endpoint_environment() -> None:
    # Strip trailing slashes from the Dapr endpoints and apply the cleaned values to the SDK settings.
    for name in ("DAPR_GRPC_ENDPOINT", "DAPR_HTTP_ENDPOINT"):
        value = os.getenv(name)
        if value:
            normalized_value = value.rstrip("/")
            os.environ[name] = normalized_value
            setattr(dapr_settings, name, normalized_value)


normalize_dapr_endpoint_environment()

# Silence the SDK's deprecation warning about http(s) schemes on gRPC endpoints.
warnings.filterwarnings(
    "ignore",
    message="http and https schemes are deprecated for grpc.*",
    category=UserWarning,
    module="dapr.conf.helpers",
)

logging.basicConfig(
    level=logging.INFO,
    format="%(asctime)s %(levelname)s [%(name)s] %(message)s",
    stream=sys.stdout,
    force=True,
)
logger = logging.getLogger("orchestrator.app")
configure_observability("orchestrator")

# Send uvicorn's default and access logs to stdout.
UVICORN_LOG_CONFIG = copy.deepcopy(uvicorn.config.LOGGING_CONFIG)
UVICORN_LOG_CONFIG["handlers"]["default"]["stream"] = "ext://sys.stdout"
UVICORN_LOG_CONFIG["handlers"]["access"]["stream"] = "ext://sys.stdout"

AGENT_PORT = int(os.getenv("AGENT_PORT", "50050"))

# Child workflow timeout and retry settings.
CHILD_WORKFLOW_TIMEOUT_SECONDS = int(os.getenv("CHILD_WORKFLOW_TIMEOUT_SECONDS", "120"))
CHILD_WORKFLOW_MAX_ATTEMPTS = int(os.getenv("CHILD_WORKFLOW_MAX_ATTEMPTS", "2"))
CHILD_WORKFLOW_RETRY_FIRST_INTERVAL_SECONDS = int(os.getenv("CHILD_WORKFLOW_RETRY_FIRST_INTERVAL_SECONDS", "5"))
CHILD_WORKFLOW_RETRY_BACKOFF_COEFFICIENT = float(os.getenv("CHILD_WORKFLOW_RETRY_BACKOFF_COEFFICIENT", "2.0"))

# Dapr app IDs of the five agent services.
ORDER_INTAKE_APP_ID = os.getenv("ORDER_INTAKE_APP_ID", "order-intake")
CARRIER_CAPACITY_APP_ID = os.getenv("CARRIER_CAPACITY_APP_ID", "carrier-capacity")
ROUTE_PLANNING_APP_ID = os.getenv("ROUTE_PLANNING_APP_ID", "route-planning")
RISK_COMPLIANCE_APP_ID = os.getenv("RISK_COMPLIANCE_APP_ID", "risk-compliance")
CUSTOMER_UPDATE_WRITER_APP_ID = os.getenv("CUSTOMER_UPDATE_WRITER_APP_ID", "customer-update-writer")

# Agent names; each child workflow name defaults to agent_workflow_id(<agent name>).
ORDER_INTAKE_AGENT_NAME = os.getenv("ORDER_INTAKE_AGENT_NAME", "order-intake-agent")
CARRIER_CAPACITY_AGENT_NAME = os.getenv("CARRIER_CAPACITY_AGENT_NAME", "carrier-capacity-agent")
ROUTE_PLANNING_AGENT_NAME = os.getenv("ROUTE_PLANNING_AGENT_NAME", "route-planning-agent")
RISK_COMPLIANCE_AGENT_NAME = os.getenv("RISK_COMPLIANCE_AGENT_NAME", "risk-compliance-agent")
CUSTOMER_UPDATE_WRITER_AGENT_NAME = os.getenv("CUSTOMER_UPDATE_WRITER_AGENT_NAME", "customer-update-writer-agent")

ORDER_INTAKE_WORKFLOW_NAME = os.getenv(
    "ORDER_INTAKE_WORKFLOW_NAME",
    agent_workflow_id(ORDER_INTAKE_AGENT_NAME),
)
CARRIER_CAPACITY_WORKFLOW_NAME = os.getenv(
    "CARRIER_CAPACITY_WORKFLOW_NAME",
    agent_workflow_id(CARRIER_CAPACITY_AGENT_NAME),
)
ROUTE_PLANNING_WORKFLOW_NAME = os.getenv(
    "ROUTE_PLANNING_WORKFLOW_NAME",
    agent_workflow_id(ROUTE_PLANNING_AGENT_NAME),
)
RISK_COMPLIANCE_WORKFLOW_NAME = os.getenv(
    "RISK_COMPLIANCE_WORKFLOW_NAME",
    agent_workflow_id(RISK_COMPLIANCE_AGENT_NAME),
)
CUSTOMER_UPDATE_WRITER_WORKFLOW_NAME = os.getenv(
    "CUSTOMER_UPDATE_WRITER_WORKFLOW_NAME",
    agent_workflow_id(CUSTOMER_UPDATE_WRITER_AGENT_NAME),
)

# Dapr component names for progress events, plan storage, and the LLM.
PROGRESS_PUBSUB_NAME = os.getenv("PROGRESS_PUBSUB_NAME", "pubsubstore")
PROGRESS_TOPIC_NAME = os.getenv("PROGRESS_TOPIC_NAME", "shipment-progress")
SHIPMENT_STATE_STORE_NAME = os.getenv("SHIPMENT_STATE_STORE_NAME", "statestore")
CHAT_COMPONENT = os.getenv("DAPR_CHAT_COMPONENT_NAME", "llama")

# Chat (LLM) readiness probe tuning.
CHAT_READINESS_CACHE_SECONDS = int(os.getenv("CHAT_READINESS_CACHE_SECONDS", "20"))
CHAT_READINESS_TIMEOUT_SECONDS = int(os.getenv("CHAT_READINESS_TIMEOUT_SECONDS", "8"))
CHAT_READINESS_FAILURE_CACHE_SECONDS = int(os.getenv("CHAT_READINESS_FAILURE_CACHE_SECONDS", "5"))
CHAT_READINESS_MAX_ATTEMPTS = int(os.getenv("CHAT_READINESS_MAX_ATTEMPTS", "1"))

READINESS_APP_IDS = [
    "orchestrator",
    ORDER_INTAKE_APP_ID,
    CARRIER_CAPACITY_APP_ID,
    ROUTE_PLANNING_APP_ID,
    RISK_COMPLIANCE_APP_ID,
    CUSTOMER_UPDATE_WRITER_APP_ID,
]
```

contracts.py

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCallResult:
    """Result of calling one agent stage, including retry and fallback metadata."""

    content: str
    source: str
    attempts: int
    fallback_reason: str = ""
    fallback_detail: str = ""
```

dapr_helpers.py

```python
from __future__ import annotations

import os


def dapr_http_base_url() -> str | None:
    # Prefer an explicit (possibly remote) endpoint; otherwise use the local sidecar port.
    dapr_http_endpoint = os.getenv("DAPR_HTTP_ENDPOINT")
    dapr_http_port = os.getenv("DAPR_HTTP_PORT")
    if dapr_http_endpoint:
        return dapr_http_endpoint.rstrip("/")
    if dapr_http_port:
        return f"http://localhost:{dapr_http_port}"
    return None


def dapr_json_headers() -> dict[str, str]:
    # Include the Dapr API token when one is configured.
    headers = {"Content-Type": "application/json"}
    dapr_api_token = os.getenv("DAPR_API_TOKEN")
    if dapr_api_token:
        headers["dapr-api-token"] = dapr_api_token
    return headers
```

progress.py

```python
from __future__ import annotations

from datetime import datetime, timezone
import json
import urllib.error
import urllib.request
from typing import Any

from dapr.clients import DaprClient

from config import PROGRESS_PUBSUB_NAME, PROGRESS_TOPIC_NAME, logger
from dapr_helpers import dapr_http_base_url, dapr_json_headers
from workflow_runtime import runtime


def _workflow_progress_event(
    task_id: str,
    workflow_instance_id: str,
    agent: str,
    status: str,
    message: str,
    progress: int,
    source: str = "system",
    fallback_reason: str = "",
    attempts: int = 0,
    fallback_detail: str = "",
) -> dict[str, Any]:
    event = {
        "taskId": task_id,
        "workflowInstanceId": workflow_instance_id,
        "agent": agent,
        "status": status,
        "message": message,
        "progress": progress,
        "source": source,
    }
    # Optional fields are only included when they carry a value.
    if fallback_reason:
        event["fallbackReason"] = fallback_reason
    if attempts:
        event["attempts"] = attempts
    if fallback_detail:
        event["fallbackDetail"] = fallback_detail
    return event


@runtime.activity(name="publish_shipment_progress")
def publish_shipment_progress(_ctx: Any, event: dict[str, Any]) -> bool:
    # Side effects such as publishing belong in an activity, since workflow code is replayed.
    event = dict(event)
    event["timestamp"] = datetime.now(timezone.utc).isoformat().replace("+00:00", "Z")
    dapr_base_url = dapr_http_base_url()
    if dapr_base_url:
        # Publish through the Dapr HTTP pub/sub API.
        url = f"{dapr_base_url}/v1.0/publish/{PROGRESS_PUBSUB_NAME}/{PROGRESS_TOPIC_NAME}"
        request = urllib.request.Request(
            url,
            data=json.dumps(event).encode("utf-8"),
            headers=dapr_json_headers(),
            method="POST",
        )
        try:
            with urllib.request.urlopen(request, timeout=3) as response:
                if response.status >= 300:
                    logger.warning("Dapr publish returned HTTP %s for progress event.", response.status)
                    return False
                return True
        except (urllib.error.URLError, TimeoutError) as exc:
            logger.warning("Failed to publish shipment progress event via HTTP: %s", exc)
            return False

    # No HTTP endpoint configured: publish through the gRPC client instead.
    try:
        with DaprClient() as client:
            client.publish_event(
                pubsub_name=PROGRESS_PUBSUB_NAME,
                topic_name=PROGRESS_TOPIC_NAME,
                data=json.dumps(event),
                data_content_type="application/json",
            )
        return True
    except Exception as exc:
        logger.warning("Failed to publish shipment progress event via Dapr gRPC: %s", exc)
        return False
```

readiness.py

```python
from __future__ import annotations

import json
import threading
import time
import urllib.error
import urllib.request
from typing import Any

from dapr_agents.llm.dapr import DaprChatClient

from config import (
    CHAT_COMPONENT,
    CHAT_READINESS_CACHE_SECONDS,
    CHAT_READINESS_FAILURE_CACHE_SECONDS,
    CHAT_READINESS_MAX_ATTEMPTS,
    CHAT_READINESS_TIMEOUT_SECONDS,
)
from dapr_helpers import dapr_http_base_url, dapr_json_headers

# Cache of the last chat-component check, shared across request threads.
_chat_readiness_cache_lock = threading.Lock()
_chat_readiness_cache: dict[str, Any] = {
    "checked_at": 0.0,
    "result": None,
}


def _readiness_entry(name: str, ready: bool, detail: str) -> dict[str, Any]:
    return {"name": name, "ready": ready, "detail": detail}


def _check_agent_readiness(app_id: str) -> dict[str, Any]:
    # A 2xx or 404 for a dummy instance ID proves the agent app is reachable via service invocation.
    dapr_base_url = dapr_http_base_url()
    if not dapr_base_url:
        return _readiness_entry(
            app_id,
            False,
            "DAPR_HTTP_ENDPOINT or DAPR_HTTP_PORT is not configured.",
        )
    url = f"{dapr_base_url}/v1.0/invoke/{app_id}/method/agent/instances/__readiness_probe__"
    request = urllib.request.Request(url, headers=dapr_json_headers(), method="GET")
    try:
        with urllib.request.urlopen(request, timeout=3) as response:
            if response.status < 300 or response.status == 404:
                return _readiness_entry(app_id, True, f"HTTP {response.status}")
            body = response.read().decode("utf-8", errors="ignore")
            return _readiness_entry(app_id, False, f"HTTP {response.status}: {body}")
    except urllib.error.HTTPError as exc:
        if exc.code == 404:
            return _readiness_entry(app_id, True, "HTTP 404")
        body = exc.read().decode("utf-8", errors="ignore") if exc.fp else ""
        return _readiness_entry(app_id, False, f"HTTP {exc.code}: {body}".strip())
    except (urllib.error.URLError, TimeoutError) as exc:
        return _readiness_entry(app_id, False, str(exc))


def _check_workflow_readiness(workflow_client: Any) -> dict[str, Any]:
    # Looking up a dummy instance exercises the workflow engine without starting anything.
    try:
        state = workflow_client.get_workflow_state(
            "__readiness_probe__",
            fetch_payloads=False,
        )
        detail = "workflow state API responded"
        if state is not None:
            detail = "workflow state API responded with probe state"
        return _readiness_entry("workflow", True, detail)
    except Exception as exc:
        return _readiness_entry("workflow", False, f"workflow state API failed: {exc}")


def _check_chat_component_readiness() -> dict[str, Any]:
    cached = _get_cached_chat_readiness()
    if cached is not None:
        return cached
    return _refresh_chat_component_readiness()


def _get_cached_chat_readiness() -> dict[str, Any] | None:
    with _chat_readiness_cache_lock:
        checked_at = _chat_readiness_cache["checked_at"]
        result = _chat_readiness_cache["result"]
    if result is None:
        return None
    # Successes are cached longer than failures, so a recovering LLM is noticed sooner.
    cache_seconds = CHAT_READINESS_CACHE_SECONDS if result.get("ready") else CHAT_READINESS_FAILURE_CACHE_SECONDS
    if time.monotonic() - checked_at > cache_seconds:
        return None
    return dict(result)


def _refresh_chat_component_readiness() -> dict[str, Any]:
    # Cheap check first (is the component registered?), then a live prompt to the model.
    registration = _check_chat_component_registration()
    if not registration["ready"]:
        _store_chat_readiness(registration)
        return registration
    last_failure = registration
    for attempt in range(1, CHAT_READINESS_MAX_ATTEMPTS + 1):
        probe = _probe_chat_component()
        if probe["ready"]:
            _store_chat_readiness(probe)
            return probe
        last_failure = _readiness_entry(
            CHAT_COMPONENT,
            False,
            f"{probe['detail']} (attempt {attempt}/{CHAT_READINESS_MAX_ATTEMPTS})",
        )
        if attempt < CHAT_READINESS_MAX_ATTEMPTS:
            time.sleep(min(2, attempt))
    _store_chat_readiness(last_failure)
    return last_failure


def _store_chat_readiness(result: dict[str, Any]) -> None:
    with _chat_readiness_cache_lock:
        _chat_readiness_cache["checked_at"] = time.monotonic()
        _chat_readiness_cache["result"] = dict(result)


def _check_chat_component_registration() -> dict[str, Any]:
    # Look for the chat component in the sidecar's metadata endpoint.
    dapr_base_url = dapr_http_base_url()
    if not dapr_base_url:
        return _readiness_entry(
            CHAT_COMPONENT,
            False,
            "DAPR_HTTP_ENDPOINT or DAPR_HTTP_PORT is not configured.",
        )
    url = f"{dapr_base_url}/v1.0/metadata"
    request = urllib.request.Request(url, headers=dapr_json_headers(), method="GET")
    try:
        with urllib.request.urlopen(request, timeout=3) as response:
            if response.status >= 300:
                return _readiness_entry(CHAT_COMPONENT, False, f"HTTP {response.status}")
            payload = json.loads(response.read().decode("utf-8"))
        components = payload.get("components") if isinstance(payload, dict) else None
        if not isinstance(components, list):
            return _readiness_entry(CHAT_COMPONENT, False, "Dapr metadata did not include components.")
        for component in components:
            if isinstance(component, dict) and component.get("name") == CHAT_COMPONENT:
                component_type = component.get("type") or "unknown"
                return _readiness_entry(CHAT_COMPONENT, True, f"component {component_type}")
        return _readiness_entry(CHAT_COMPONENT, False, "Chat component not registered with Dapr.")
    except urllib.error.HTTPError as exc:
        body = exc.read().decode("utf-8", errors="ignore") if exc.fp else ""
        return _readiness_entry(CHAT_COMPONENT, False, f"HTTP {exc.code}: {body}".strip())
    except (urllib.error.URLError, TimeoutError, json.JSONDecodeError) as exc:
        return _readiness_entry(CHAT_COMPONENT, False, str(exc))


def _probe_chat_component() -> dict[str, Any]:
    # Send a tiny prompt at temperature 0 and treat any non-empty reply as success.
    try:
        response = DaprChatClient(
            component_name=CHAT_COMPONENT,
            timeout=CHAT_READINESS_TIMEOUT_SECONDS,
        ).generate(
            messages=[{"role": "user", "content": "Reply with the single word ok."}],
            temperature=0,
        )
        message = response.get_message() if hasattr(response, "get_message") else None
        content = message.content.strip() if message and isinstance(message.content, str) else ""
        if content:
            return _readiness_entry(CHAT_COMPONENT, True, "Live chat probe succeeded.")
        return _readiness_entry(CHAT_COMPONENT, False, "Live chat probe returned an empty response.")
    except Exception as exc:
        return _readiness_entry(CHAT_COMPONENT, False, f"Live chat probe failed: {exc}")
```

state_store.py

```python
from __future__ import annotations

from datetime import datetime, timezone
import json
import urllib.error
import urllib.request
from typing import Any

from config import SHIPMENT_STATE_STORE_NAME, logger
from dapr_helpers import dapr_http_base_url, dapr_json_headers
from workflow_runtime import runtime


def _shipment_execution_state_key(task_id: str) -> str:
    return f"shipment-execution-plan-{task_id}"


def _shipment_execution_state_record(payload: dict[str, Any]) -> dict[str, Any]:
    # Map the workflow's snake_case payload to the camelCase record stored in the state store.
    saved_at = datetime.now(timezone.utc).isoformat().replace("+00:00", "Z")
    return {
        "taskId": payload["task_id"],
        "workflowInstanceId": payload["workflow_instance_id"],
        "status": "completed",
        "savedAt": saved_at,
        "scenario": payload["shipment_scenario"],
        "shipmentRequest": payload["shipment_request"],
        "carrierOptions": payload["carrier_options"],
        "routePlan": payload["route_plan"],
        "riskCompliance": payload["risk_compliance"],
        "planningBrief": payload["planning_brief"],
        "executionPlan": payload["execution_plan"],
        "sources": payload["sources"],
    }


@runtime.activity(name="save_shipment_execution_state")
def save_shipment_execution_state(_ctx: Any, payload: dict[str, Any]) -> bool:
    dapr_base_url = dapr_http_base_url()
    if not dapr_base_url:
        logger.warning("DAPR_HTTP_ENDPOINT or DAPR_HTTP_PORT is not configured; shipment execution state was not saved.")
        return False
    task_id = str(payload.get("task_id") or "").strip()
    if not task_id:
        logger.warning("Shipment execution state was not saved because task_id was empty.")
        return False
    # The Dapr state API accepts a JSON array of key/value entries.
    state_entry = {
        "key": _shipment_execution_state_key(task_id),
        "value": _shipment_execution_state_record(payload),
    }
    url = f"{dapr_base_url}/v1.0/state/{SHIPMENT_STATE_STORE_NAME}"
    request = urllib.request.Request(
        url,
        data=json.dumps([state_entry]).encode("utf-8"),
        headers=dapr_json_headers(),
        method="POST",
    )
    try:
        with urllib.request.urlopen(request, timeout=5) as response:
            if response.status >= 300:
                logger.warning("Dapr state save returned HTTP %s for shipment execution state.", response.status)
                return False
            return True
    except (urllib.error.URLError, TimeoutError) as exc:
        logger.warning("Failed to save shipment execution state: %s", exc)
        return False
```

workflow_helpers.py

from __future__ import annotations

from datetime import timedelta

import json

import re

from typing import Any, Callable

import dapr.ext.workflow as wf

from dapr.ext.workflow import DaprWorkflowContext

from config import (

CHILD_WORKFLOW_MAX_ATTEMPTS,

CHILD_WORKFLOW_RETRY_BACKOFF_COEFFICIENT,

CHILD_WORKFLOW_RETRY_FIRST_INTERVAL_SECONDS,

CHILD_WORKFLOW_TIMEOUT_SECONDS,

logger,

)

from contracts import AgentCallResult

def preview(value: Any, limit: int = 800) -> str:

text = value if isinstance(value, str) else str(value)

text = text.replace("\n", "\\n")

    return text if len(text) <= limit else f"{text[:limit]}...<truncated {len(text) - limit} chars>"

def log_stage(stage: str, **values: Any) -> None:
    details = " ".join(f"{key}={preview(value)}" for key, value in values.items())
    logger.debug("[Orchestrator] %s %s", stage, details)

def _extract_agent_content(agent_result: Any, stage: str) -> str:
    if isinstance(agent_result, dict):
        content = agent_result.get("content")
        if _is_usable_agent_content(content):
            return content
    if _is_usable_agent_content(agent_result):
        return agent_result
    raise ValueError(f"{stage} did not return a usable content payload.")

def _is_usable_agent_content(value: Any) -> bool:
    return isinstance(value, str) and value.strip().lower() not in {"", "none", "null"}

def _is_partial_tool_call(value: str) -> bool:
    normalized = value.strip()
    if normalized.startswith("<|python_tag|>"):
        normalized = normalized[len("<|python_tag|>") :].strip()
    return '"name"' in normalized and '"parameters"' in normalized

def _is_agent_error_content(value: str) -> bool:
    normalized = value.strip().lower()
    return (
        normalized.startswith("error generating response due to previous error")
        or normalized.startswith("error executing tool")
        or '"iserror": true' in normalized
        or "please check and resubmit the logistics planning brief" in normalized
        or normalized.startswith("error in ")
        or "validation error occurred" in normalized
    )

def _is_unusable_agent_output(value: str) -> bool:
    return _is_partial_tool_call(value) or _is_agent_error_content(value)

def _fallback_reason_description(reason: str) -> str:
    descriptions = {
        "timeout": "the child workflow did not finish within the configured timeout",
        "llm_tool_call_final": "the agent returned a tool call as the final answer instead of final output",
        "tool_error": "the expected tool returned an error payload",
        "empty_output": "the agent returned empty output",
        "invalid_output": "the agent returned a malformed or unusable payload",
        "workflow_error": "the child workflow failed while reading the result",
        "retry_exhausted": "all retry attempts were exhausted",
    }
    return descriptions.get(reason, "usable agent output was not returned")

def _classify_unusable_agent_output(value: str) -> str:
    if _is_partial_tool_call(value):
        return "llm_tool_call_final"
    if _is_agent_error_content(value):
        return "tool_error"
    if not _is_usable_agent_content(value):
        return "empty_output"
    return "invalid_output"

def _classify_agent_result_issue(agent_result: Any) -> str:
    if isinstance(agent_result, dict):
        if agent_result.get("isError") is True:
            return "tool_error"
        try:
            serialized_result = json.dumps(agent_result)
            if _is_agent_error_content(serialized_result):
                return "tool_error"
        except TypeError:
            pass
        content = agent_result.get("content")
        if not _is_usable_agent_content(content):
            return "empty_output" if content is None or str(content).strip().lower() in {"", "none", "null"} else "invalid_output"
        return _classify_unusable_agent_output(content)
    if not _is_usable_agent_content(agent_result):
        return "empty_output" if agent_result is None or str(agent_result).strip().lower() in {"", "none", "null"} else "invalid_output"
    return _classify_unusable_agent_output(str(agent_result))

def _child_workflow_retry_policy() -> wf.RetryPolicy | None:
    if CHILD_WORKFLOW_MAX_ATTEMPTS <= 1:
        return None
    return wf.RetryPolicy(
        first_retry_interval=timedelta(seconds=CHILD_WORKFLOW_RETRY_FIRST_INTERVAL_SECONDS),
        max_number_of_attempts=CHILD_WORKFLOW_MAX_ATTEMPTS,
        backoff_coefficient=CHILD_WORKFLOW_RETRY_BACKOFF_COEFFICIENT,
    )

def _child_workflow_attempt_instance_id(parent_instance_id: str, stage: str, attempt: int) -> str | None:
    if attempt == 1:
        return None
    normalized_stage = "".join(character.lower() if character.isalnum() else "-" for character in stage).strip("-")
    return f"{parent_instance_id}:{normalized_stage}:retry-{attempt}"

def _task_result(task: Any) -> Any:
    get_result = getattr(task, "get_result", None)
    if callable(get_result):
        return get_result()
    return task.result

def _call_child_workflow_or_fallback(
    ctx: DaprWorkflowContext,
    *,
    workflow: str,
    input: dict[str, Any],
    app_id: str,
    stage: str,
    fallback: str,
    timeout_seconds: int = CHILD_WORKFLOW_TIMEOUT_SECONDS,
    validate_content: Callable[[str], str | None] | None = None,
) -> AgentCallResult:
    max_attempts = max(1, CHILD_WORKFLOW_MAX_ATTEMPTS)
    retry_policy = _child_workflow_retry_policy()
    last_reason = "retry_exhausted"
    last_detail = ""

    for attempt in range(1, max_attempts + 1):
        agent_result: Any = None
        # The first attempt lets the runtime assign an instance ID; later attempts
        # get a deterministic ID derived from the parent instance, stage and attempt.
        child_task = ctx.call_child_workflow(
            workflow=workflow,
            input=input,
            instance_id=_child_workflow_attempt_instance_id(ctx.instance_id, stage, attempt),
            retry_policy=retry_policy,
            app_id=app_id,
        )
        # Race the child workflow against a durable timer; whichever completes first wins.
        timeout_task = ctx.create_timer(timedelta(seconds=timeout_seconds))
        winner = yield wf.when_any([child_task, timeout_task])

        if winner is timeout_task:
            last_reason = "timeout"
            last_detail = f"{stage} attempt {attempt}/{max_attempts} timed out after {timeout_seconds}s."
            logger.warning(
                "%s attempt %s/%s timed out after %ss.",
                stage,
                attempt,
                max_attempts,
                timeout_seconds,
            )
            continue

        try:
            agent_result = _task_result(child_task)
            content = _extract_agent_content(agent_result, stage)

            if _is_unusable_agent_output(content):
                last_reason = _classify_unusable_agent_output(content)
                last_detail = f"{stage} attempt {attempt}/{max_attempts} returned {_fallback_reason_description(last_reason)}."
                logger.warning(
                    "%s attempt %s/%s returned unusable content. reason=%s",
                    stage,
                    attempt,
                    max_attempts,
                    last_reason,
                )
                continue

            if validate_content is not None:
                validation_error = validate_content(content)
                if validation_error is not None:
                    last_reason = "invalid_output"
                    last_detail = f"{stage} attempt {attempt}/{max_attempts} returned invalid output. {validation_error}"
                    logger.warning(
                        "%s attempt %s/%s returned invalid content. reason=%s detail=%s",
                        stage,
                        attempt,
                        max_attempts,
                        last_reason,
                        validation_error,
                    )
                    continue

            logger.info("%s completed with LLM output on attempt %s/%s.", stage, attempt, max_attempts)
            return AgentCallResult(content=content, source="llm", attempts=attempt)
        except Exception as exc:
            last_reason = _classify_agent_result_issue(agent_result) if agent_result is not None else "workflow_error"
            last_detail = str(exc)
            logger.warning(
                "%s attempt %s/%s returned an unusable payload. reason=%s Error: %s",
                stage,
                attempt,
                max_attempts,
                last_reason,
                exc,
            )

    # Every attempt timed out or returned unusable output: use the deterministic fallback.
    logger.warning(
        "%s exhausted %s attempt(s); using deterministic fallback. reason=%s detail=%s",
        stage,
        max_attempts,
        last_reason,
        last_detail,
    )
    return AgentCallResult(
        content=fallback,
        source="fallback",
        attempts=max_attempts,
        fallback_reason=last_reason,
        fallback_detail=last_detail,
    )

def _completion_message(message: str, result: AgentCallResult | str) -> str:
    source = result.source if isinstance(result, AgentCallResult) else result
    if source == "fallback":
        reason = result.fallback_reason if isinstance(result, AgentCallResult) else ""
        attempts = result.attempts if isinstance(result, AgentCallResult) else CHILD_WORKFLOW_MAX_ATTEMPTS
        reason_text = _fallback_reason_description(reason)
        return f"{message} Deterministic rules were used after {attempts} attempt(s) because {reason_text}."
    return f"{message} LLM response was used."

def _compact_payload(value: str, limit: int = 700) -> str:
    if _is_unusable_agent_output(value):
        return "Requires manual confirmation from the completed shipment scenario before dispatch."
    normalized = " ".join(value.replace("```json", "").replace("```", "").split())
    return normalized if len(normalized) <= limit else f"{normalized[:limit].rstrip()}..."

def _fallback_execution_plan(
    shipment_scenario: str,
    shipment_request: str,
    carrier_options: str,
    route_plan: str,
    risk_compliance: str,
) -> str:
    return (
        "# Shipment Execution Plan\n\n"
        "## Automation Source\n\n"
        "Deterministic fallback was used because the final LLM response was unavailable or unusable.\n\n"
        "## Shipment Scenario\n\n"
        f"{shipment_scenario}\n\n"
        "## Normalized Shipment Request\n\n"
        f"{_compact_payload(shipment_request)}\n\n"
        "## Carrier And Capacity\n\n"
        f"{_compact_payload(carrier_options)}\n\n"
        "## Route And Schedule\n\n"
        f"{_compact_payload(route_plan)}\n\n"
        "## Risk And Compliance\n\n"
        f"{_compact_payload(risk_compliance)}\n\n"
        "## Dispatch Controls\n\n"
        "Dispatch should proceed only after required documents, carrier acceptance, pickup "
        "appointment, and risk mitigations are confirmed.\n\n"
        "## Execution Checklist\n\n"
        "- Validate normalized shipment request.\n"
        "- Confirm carrier capacity and service level.\n"
        "- Confirm route milestones and delivery window.\n"
        "- Clear documentation and compliance blockers.\n"
        "- Monitor risk triggers and exception thresholds.\n"
        "- Send proactive customer updates at pickup, hub departure, out-for-delivery, and delivery."
    )

def _normalize_for_presence_check(value: str) -> str:
    return " ".join(value.lower().split())

def _scenario_key_terms(value: str) -> set[str]:
    ignored_terms = {
        "move",
        "ship",
        "shipment",
        "from",
        "with",
        "using",
        "service",
        "next",
        "week",
        "required",
    }
    return {
        term
        for term in re.findall(r"[a-z0-9]+", value.lower())
        if len(term) >= 4 and term not in ignored_terms
    }

def _contains_current_scenario_terms(content: str, shipment_scenario: str) -> bool:
    scenario_terms = _scenario_key_terms(shipment_scenario)
    if not scenario_terms:
        return True
    content_terms = _scenario_key_terms(content)
    matched_terms = scenario_terms.intersection(content_terms)
    required_matches = min(2, max(1, len(scenario_terms) // 3))
    return len(matched_terms) >= required_matches

def _has_dangling_json_fragment(content: str) -> bool:
    in_fenced_block = False
    open_brace_line = False
    for raw_line in content.splitlines():
        line = raw_line.strip()
        if line.startswith("```"):
            in_fenced_block = not in_fenced_block
            continue
        if in_fenced_block:
            continue
        if open_brace_line and line.startswith("##"):
            return True
        if line == "{":
            open_brace_line = True
            continue
        if open_brace_line and line == "}":
            open_brace_line = False
    return open_brace_line

def _validate_final_execution_plan(content: str, shipment_scenario: str) -> str | None:
    normalized_content = _normalize_for_presence_check(content)
    if "shipment execution plan" not in normalized_content:
        return "The final plan did not include the expected title."
    if not _contains_current_scenario_terms(content, shipment_scenario):
        return "The final plan did not include the current shipment scenario."
    if _has_dangling_json_fragment(content):
        return "The final plan contained a dangling JSON fragment."
    return None

def _get_workflow_input(workflow_input: Any) -> tuple[str, str, dict[str, str]]:
    if isinstance(workflow_input, dict):
        task = workflow_input.get("task")
        task_id = workflow_input.get("task_id")
        trace_context = workflow_input.get("trace_context")
        if isinstance(task, str) and task.strip():
            normalized_task_id = task_id.strip() if isinstance(task_id, str) and task_id.strip() else ""
            return task.strip(), normalized_task_id, trace_context if isinstance(trace_context, dict) else {}
    if isinstance(workflow_input, str) and workflow_input.strip():
        return workflow_input.strip(), "", {}
    raise ValueError("Workflow input did not include a usable task.")

def _build_planning_brief(
    shipment_scenario: str,
    shipment_request: str,
    carrier_options: str,
    route_plan: str,
    risk_compliance: str,
    source_notes: dict[str, str] | None = None,
) -> str:
    source_notes = source_notes or {}
    automation_sources = "\n".join(
        f"- {stage}: {source_notes.get(stage, 'llm')}"
        for stage in ("order_intake", "carrier_capacity", "route_planning", "risk_compliance")
    )
    return (
        "## Logistics Planning Brief\n\n"
        f"### Original Scenario\n{shipment_scenario}\n\n"
        f"### Automation Sources\n{automation_sources}\n\n"
        f"### Normalized Shipment Request\n```json\n{shipment_request}\n```\n\n"
        f"### Feasible Carrier Options\n```json\n{carrier_options}\n```\n\n"
        f"### Recommended Route And Schedule\n```json\n{route_plan}\n```\n\n"
        f"### Risk And Compliance Assessment\n```json\n{risk_compliance}\n```\n\n"
        "### Planning Decision\n"
        "Proceed with the top-ranked feasible carrier when documents and pickup readiness are confirmed. "
        "Escalate to the alternate route if risk mitigation cannot be completed before dispatch."
    )

# workflow_runtime.py

from __future__ import annotations

import logging
import sys

import dapr.ext.workflow as wf
from dapr.ext.workflow.logger import LoggerOptions

class DurableTaskNoiseFilter(logging.Filter):
    _ignored_messages = (
        "Ignoring unexpected taskCompleted event with ID",
        "Ignoring unexpected timerFired event with ID",
    )

    def filter(self, record: logging.LogRecord) -> bool:
        if record.name != "durabletask-worker":
            return True
        message = record.getMessage()
        return not any(ignored in message for ignored in self._ignored_messages)

def configure_workflow_loggers() -> None:
    for logger_name in ("WorkflowRuntime", "durabletask-worker"):
        logging.getLogger(logger_name).propagate = False

def create_workflow_logger_options() -> LoggerOptions:
    configure_workflow_loggers()
    handler = logging.StreamHandler(sys.stdout)
    handler.addFilter(DurableTaskNoiseFilter())
    return LoggerOptions(log_handler=handler)

runtime = wf.WorkflowRuntime(logger_options=create_workflow_logger_options())

# workflow.py

from __future__ import annotations

from typing import Any

from dapr.ext.workflow import DaprWorkflowContext
from opentelemetry.trace import Status, StatusCode

from config import (
    CARRIER_CAPACITY_APP_ID,
    CARRIER_CAPACITY_WORKFLOW_NAME,
    CUSTOMER_UPDATE_WRITER_APP_ID,
    CUSTOMER_UPDATE_WRITER_WORKFLOW_NAME,
    ORDER_INTAKE_APP_ID,
    ORDER_INTAKE_WORKFLOW_NAME,
    RISK_COMPLIANCE_APP_ID,
    RISK_COMPLIANCE_WORKFLOW_NAME,
    ROUTE_PLANNING_APP_ID,
    ROUTE_PLANNING_WORKFLOW_NAME,
)
from shared.observability import record_workflow_event
from progress import _workflow_progress_event, publish_shipment_progress
from state_store import save_shipment_execution_state
from workflow_helpers import (
    _build_planning_brief,
    _call_child_workflow_or_fallback,
    _completion_message,
    _fallback_execution_plan,
    _get_workflow_input,
    _validate_final_execution_plan,
)
from workflow_runtime import runtime

@runtime.workflow(name="shipment_execution_workflow")
def shipment_execution_workflow(ctx: DaprWorkflowContext, workflow_input: Any) -> str:
    """Run the five logistics agents as child workflows."""
    shipment_scenario, task_id, trace_context = _get_workflow_input(workflow_input)
    correlation_id = task_id or ctx.instance_id

    yield ctx.call_activity(
        publish_shipment_progress,
        input=_workflow_progress_event(
            correlation_id,
            ctx.instance_id,
            "workflow",
            "started",
            "Shipment execution workflow started.",
            4,
        ),
    )
    record_workflow_event(
        "orchestrator.shipment_execution_workflow.started",
        trace_context,
        workflow_id=ctx.instance_id,
    )

    try:
        yield ctx.call_activity(
            publish_shipment_progress,
            input=_workflow_progress_event(
                correlation_id,
                ctx.instance_id,
                "order-intake",
                "started",
                "Order intake is normalizing the shipment request.",
                10,
            ),
        )
        record_workflow_event(
            "orchestrator.call_order_intake.scheduled",
            trace_context,
            workflow_id=ctx.instance_id,
            target_app_id=ORDER_INTAKE_APP_ID,
        )
        shipment_request_result = yield from _call_child_workflow_or_fallback(
            ctx,
            workflow=ORDER_INTAKE_WORKFLOW_NAME,
            input={"task": shipment_scenario, "_otel_span_context": trace_context},
            app_id=ORDER_INTAKE_APP_ID,
            stage="Order intake",
            fallback=(
                "Use the original shipment scenario as the canonical request. "
                f"Scenario: {shipment_scenario}"
            ),
        )
        shipment_request = shipment_request_result.content
        yield ctx.call_activity(
            publish_shipment_progress,
            input=_workflow_progress_event(
                correlation_id,
                ctx.instance_id,
                "order-intake",
                "completed",
                _completion_message("Order intake produced the canonical shipment request.", shipment_request_result),
                20,
                shipment_request_result.source,
                shipment_request_result.fallback_reason,
                shipment_request_result.attempts,
                shipment_request_result.fallback_detail,
            ),
        )
        record_workflow_event(
            "orchestrator.call_order_intake.completed",
            trace_context,
            workflow_id=ctx.instance_id,
            target_app_id=ORDER_INTAKE_APP_ID,
        )

        carrier_task = f"shipment_request:\n{shipment_request}"
        yield ctx.call_activity(
            publish_shipment_progress,
            input=_workflow_progress_event(
                correlation_id,
                ctx.instance_id,
                "carrier-capacity",
                "started",
                "Carrier capacity is ranking feasible options.",
                28,
            ),
        )
        record_workflow_event(
            "orchestrator.call_carrier_capacity.scheduled",
            trace_context,
            workflow_id=ctx.instance_id,
            target_app_id=CARRIER_CAPACITY_APP_ID,
        )
        carrier_options_result = yield from _call_child_workflow_or_fallback(
            ctx,
            workflow=CARRIER_CAPACITY_WORKFLOW_NAME,
            input={"task": carrier_task, "_otel_span_context": trace_context},
            app_id=CARRIER_CAPACITY_APP_ID,
            stage="Carrier capacity",
            fallback=(
                "Carrier capacity requires manual confirmation. Confirm full truckload "
                "availability, equipment fit, pickup appointment, service level, and carrier acceptance."
            ),
        )
        carrier_options = carrier_options_result.content
        yield ctx.call_activity(
            publish_shipment_progress,
            input=_workflow_progress_event(
                correlation_id,
                ctx.instance_id,
                "carrier-capacity",
                "completed",
                _completion_message("Carrier capacity returned ranked options.", carrier_options_result),
                40,
                carrier_options_result.source,
                carrier_options_result.fallback_reason,
                carrier_options_result.attempts,
                carrier_options_result.fallback_detail,
            ),
        )
        record_workflow_event(
            "orchestrator.call_carrier_capacity.completed",
            trace_context,
            workflow_id=ctx.instance_id,
            target_app_id=CARRIER_CAPACITY_APP_ID,
        )

        route_task = (
            f"shipment_request:\n{shipment_request}\n\n"
            f"carrier_options:\n{carrier_options}"
        )
        yield ctx.call_activity(
            publish_shipment_progress,
            input=_workflow_progress_event(
                correlation_id,
                ctx.instance_id,
                "route-planning",
                "started",
                "Route planning is building the movement plan.",
                48,
            ),
        )
        record_workflow_event(
            "orchestrator.call_route_planning.scheduled",
            trace_context,
            workflow_id=ctx.instance_id,
            target_app_id=ROUTE_PLANNING_APP_ID,
        )
        route_plan_result = yield from _call_child_workflow_or_fallback(
            ctx,
            workflow=ROUTE_PLANNING_WORKFLOW_NAME,
            input={"task": route_task, "_otel_span_context": trace_context},
            app_id=ROUTE_PLANNING_APP_ID,
            stage="Route planning",
            fallback=(
                "Route planning requires manual confirmation. Confirm pickup, linehaul, "
                "delivery appointment, ETA, route milestones, and fallback routing before dispatch."
            ),
        )
        route_plan = route_plan_result.content
        yield ctx.call_activity(
            publish_shipment_progress,
            input=_workflow_progress_event(
                correlation_id,
                ctx.instance_id,
                "route-planning",
                "completed",
                _completion_message("Route planning returned milestones and ETA.", route_plan_result),
                60,
                route_plan_result.source,
                route_plan_result.fallback_reason,
                route_plan_result.attempts,
                route_plan_result.fallback_detail,
            ),
        )
        record_workflow_event(
            "orchestrator.call_route_planning.completed",
            trace_context,
            workflow_id=ctx.instance_id,
            target_app_id=ROUTE_PLANNING_APP_ID,
        )

        risk_task = (
            f"shipment_request:\n{shipment_request}\n\n"
            f"route_plan:\n{route_plan}"
        )
        yield ctx.call_activity(
            publish_shipment_progress,
            input=_workflow_progress_event(
                correlation_id,
                ctx.instance_id,
                "risk-compliance",
                "started",
                "Risk and compliance is checking blockers and mitigations.",
                68,
            ),
        )
        record_workflow_event(
            "orchestrator.call_risk_compliance.scheduled",
            trace_context,
            workflow_id=ctx.instance_id,
            target_app_id=RISK_COMPLIANCE_APP_ID,
        )
        risk_compliance_result = yield from _call_child_workflow_or_fallback(
            ctx,
            workflow=RISK_COMPLIANCE_WORKFLOW_NAME,
            input={"task": risk_task, "_otel_span_context": trace_context},
            app_id=RISK_COMPLIANCE_APP_ID,
            stage="Risk compliance",
            fallback=(
                "Risk and compliance require manual confirmation. Verify bill of lading, "
                "cargo requirements, insurance, restrictions, temperature or handling controls, "
                "and exception triggers before dispatch."
            ),
        )
        risk_compliance = risk_compliance_result.content
        yield ctx.call_activity(
            publish_shipment_progress,
            input=_workflow_progress_event(
                correlation_id,
                ctx.instance_id,
                "risk-compliance",
                "completed",
                _completion_message("Risk and compliance returned documents, restrictions, and mitigations.", risk_compliance_result),
                80,
                risk_compliance_result.source,
                risk_compliance_result.fallback_reason,
                risk_compliance_result.attempts,
                risk_compliance_result.fallback_detail,
            ),
        )
        record_workflow_event(
            "orchestrator.call_risk_compliance.completed",
            trace_context,
            workflow_id=ctx.instance_id,
            target_app_id=RISK_COMPLIANCE_APP_ID,
        )

        planning_brief = _build_planning_brief(
            shipment_scenario,
            shipment_request,
            carrier_options,
            route_plan,
            risk_compliance,
            {
                "order_intake": shipment_request_result.source,
                "carrier_capacity": carrier_options_result.source,
                "route_planning": route_plan_result.source,
                "risk_compliance": risk_compliance_result.source,
            },
        )
        customer_update_task = (
            "Turn the following logistics planning brief into a shipment execution plan "
            "with a concise customer-facing update.\n\n"
            f"scenario:\n{shipment_scenario}\n\n"
            f"planning_brief:\n{planning_brief}"
        )
        yield ctx.call_activity(
            publish_shipment_progress,
            input=_workflow_progress_event(
                correlation_id,
                ctx.instance_id,
                "customer-update-writer",
                "started",
                "Customer update writer is assembling the execution plan.",
                88,
            ),
        )
        record_workflow_event(
            "orchestrator.call_customer_update_writer.scheduled",
            trace_context,
            workflow_id=ctx.instance_id,
            target_app_id=CUSTOMER_UPDATE_WRITER_APP_ID,
        )
        execution_plan_result = yield from _call_child_workflow_or_fallback(
            ctx,
            workflow=CUSTOMER_UPDATE_WRITER_WORKFLOW_NAME,
            input={"task": customer_update_task, "_otel_span_context": trace_context},
            app_id=CUSTOMER_UPDATE_WRITER_APP_ID,
            stage="Customer update writer",
            fallback=_fallback_execution_plan(
                shipment_scenario,
                shipment_request,
                carrier_options,
                route_plan,
                risk_compliance,
            ),
            validate_content=lambda content: _validate_final_execution_plan(content, shipment_scenario),
        )
        execution_plan = execution_plan_result.content
        yield ctx.call_activity(
            publish_shipment_progress,
            input=_workflow_progress_event(
                correlation_id,
                ctx.instance_id,
                "customer-update-writer",
                "completed",
                _completion_message("Customer update writer returned the final execution plan.", execution_plan_result),
                96,
                execution_plan_result.source,
                execution_plan_result.fallback_reason,
                execution_plan_result.attempts,
                execution_plan_result.fallback_detail,
            ),
        )
        record_workflow_event(
            "orchestrator.call_customer_update_writer.completed",
            trace_context,
            workflow_id=ctx.instance_id,
            target_app_id=CUSTOMER_UPDATE_WRITER_APP_ID,
        )

        yield ctx.call_activity(
            save_shipment_execution_state,
            input={
                "task_id": correlation_id,
                "workflow_instance_id": ctx.instance_id,
                "shipment_scenario": shipment_scenario,
                "shipment_request": shipment_request,
                "carrier_options": carrier_options,
                "route_plan": route_plan,
                "risk_compliance": risk_compliance,
                "planning_brief": planning_brief,
                "execution_plan": execution_plan,
                "sources": {
                    "order_intake": shipment_request_result.source,
                    "carrier_capacity": carrier_options_result.source,
                    "route_planning": route_plan_result.source,
                    "risk_compliance": risk_compliance_result.source,
                    "customer_update_writer": execution_plan_result.source,
                },
            },
        )
        record_workflow_event(
            "orchestrator.shipment_execution_workflow.completed",
            trace_context,
            workflow_id=ctx.instance_id,
        )
        yield ctx.call_activity(
            publish_shipment_progress,
            input=_workflow_progress_event(
                correlation_id,
                ctx.instance_id,
                "workflow",
                "completed",
                "Shipment execution workflow completed.",
                100,
            ),
        )
        return execution_plan
    except Exception as exc:
        yield ctx.call_activity(
            publish_shipment_progress,
            input=_workflow_progress_event(
                correlation_id,
                ctx.instance_id,
                "workflow",
                "failed",
                str(exc),
                100,
            ),
        )
        record_workflow_event(
            "orchestrator.shipment_execution_workflow.failed",
            trace_context,
            workflow_id=ctx.instance_id,
            status=Status(StatusCode.ERROR, str(exc)),
        )
        raise

Order Intake - Agent

The Order Intake agent extracts lane details, cargo information, volume, service level, delivery windows, equipment requirements, and handling constraints. Downstream agents can then work with structured shipment data instead of raw user input.
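To see the idea in isolation, here is a minimal, standalone sketch of this kind of normalization. It does three things:

- pulls the lane from a "from X to Y" phrase,
- converts a kilogram weight to pounds,
- flags expedited service.

`normalize_scenario` and its reduced field set are hypothetical simplifications for illustration only. The agent's actual tool is `normalize_shipment_request` in `app.py`, which covers more fields.

```python
import re
from typing import Any

# Hypothetical, simplified normalizer for illustration only; it mirrors a subset
# of the regex-based field extraction used by the Order Intake tool.
LANE_PATTERN = re.compile(
    r"\bfrom\s+(.+?)\s+to\s+(.+?)(?:\s+by\s+|\s+with\s+|\s+for\s+|$)",
    re.IGNORECASE,
)
WEIGHT_PATTERN = re.compile(r"(\d[\d,]*(?:\.\d+)?)\s*(lbs?|pounds?|kgs?|kilograms?)\b")
KG_TO_LB = 2.20462


def normalize_scenario(scenario: str) -> dict[str, Any]:
    text = scenario.strip()
    lower = text.lower()

    # Lane: the shortest "from ... to ..." span, trimmed of trailing punctuation.
    lane = LANE_PATTERN.search(text)
    if lane:
        origin, destination = lane.group(1).strip(" ,."), lane.group(2).strip(" ,.")
    else:
        origin, destination = "unspecified origin", "unspecified destination"

    # Weight: normalize to whole pounds, converting from kilograms when needed.
    weight_lbs = None
    weight = WEIGHT_PATTERN.search(lower)
    if weight:
        value = float(weight.group(1).replace(",", ""))
        is_kg = weight.group(2).startswith("k")
        weight_lbs = round(value * KG_TO_LB) if is_kg else round(value)

    return {
        "origin": origin,
        "destination": destination,
        "weight_lbs": weight_lbs,
        "service_level": "expedited" if "expedite" in lower or "urgent" in lower else "standard",
    }


print(normalize_scenario("Ship 2,000 kg of parts from Dallas, TX to Chicago, IL by Friday, expedited."))
```

Because this parsing is deterministic, it returns the same JSON-friendly shape every time. That property is what lets the agent fall back on rule-based normalization when the LLM's own tool call comes back malformed.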

# pyproject.toml
[project]
name = "agent-order-intake"
version = "0.1.0"
requires-python = ">=3.11,<3.14"
dependencies = [
    "dapr-agents==1.0.0",
    "dapr==1.17.3",
    "opentelemetry-api==1.41.1",
    "opentelemetry-sdk==1.41.1",
    "opentelemetry-exporter-otlp-proto-grpc==1.41.1",
    "python-dotenv==1.0.0",
    "uvicorn[standard]==0.31.1",
]

# app.py

from __future__ import annotations

import json
import re
import sys
from pathlib import Path
from typing import Any

sys.path.append(str(Path(__file__).resolve().parents[1]))

from pydantic import BaseModel, Field

from dapr_agents import DurableAgent
from dapr_agents.agents.configs import AgentExecutionConfig, ToolExecutionMode
from dapr_agents.llm.dapr import DaprChatClient
from dapr_agents.tool import AgentTool
from dapr_agents.workflow.runners import AgentRunner
from shared.observability import (
    reset_tool_trace_context,
    set_tool_trace_context,
    start_span,
)
from shared.agent_config import configure_agent_environment
from shared.agent_host import (
    create_agent_app,
    run_agent_service,
)
from shared.logging_utils import create_stage_logger
from shared.tool_recovery import (
    get_last_tool_content as _get_last_tool_content,
    looks_like_tool_call_content as _looks_like_tool_call_content,
    replace_response_with_tool_output,
    strip_agent_markup as _strip_agent_markup,
    tool_call_content_from_response as _tool_call_content_from_response,
)
from shared.workflow_runtime import create_workflow_runtime

runtime_config = configure_agent_environment(
    service_name="order-intake",
    logger_name="order_intake.app",
    default_port=50051,
)

logger = runtime_config.logger
UVICORN_LOG_CONFIG = runtime_config.uvicorn_log_config
AGENT_PORT = runtime_config.agent_port
CHAT_COMPONENT = runtime_config.chat_component
WORKFLOW_TRACE_CONTEXTS: dict[str, dict[str, str]] = {}

log_stage = create_stage_logger(logger, "OrderIntake")

class ShipmentScenarioToolArgs(BaseModel):
    scenario: str = Field(..., description="Shipment scenario or customer request to normalize.")

class TracedOrderIntakeAgent(DurableAgent):
    def record_initial_entry(self, ctx: Any, payload: dict[str, Any]) -> None:
        trace_context = payload.get("trace_context")
        if isinstance(trace_context, dict):
            WORKFLOW_TRACE_CONTEXTS[ctx.workflow_id] = {
                str(key): str(value) for key, value in trace_context.items()
            }
        token = set_tool_trace_context(WORKFLOW_TRACE_CONTEXTS.get(ctx.workflow_id))
        try:
            with start_span(
                "order_intake.workflow.started",
                workflow_id=ctx.workflow_id,
                source=payload.get("source"),
                triggering_workflow_instance_id=payload.get("triggering_workflow_instance_id"),
            ):
                super().record_initial_entry(ctx, payload)
        finally:
            reset_tool_trace_context(token)

    def call_llm(self, ctx: Any, payload: dict[str, Any]) -> dict[str, Any]:
        token = set_tool_trace_context(WORKFLOW_TRACE_CONTEXTS.get(ctx.workflow_id))
        try:
            with start_span(
                "order_intake.llm.call",
                workflow_id=ctx.workflow_id,
                source=payload.get("source"),
            ):
                response = super().call_llm(ctx, payload)
                tool_output = self._get_last_tool_content(payload.get("instance_id"), "NormalizeShipmentRequest")
                if tool_output is None:
                    tool_output = _normalize_from_tool_call_content(response, ctx, payload.get("task"))
                return replace_response_with_tool_output(
                    response,
                    tool_output,
                    "OrderIntake",
                    logger,
                )
        finally:
            reset_tool_trace_context(token)

    def run_tool(self, ctx: Any, payload: dict[str, Any]) -> dict[str, Any]:
        token = set_tool_trace_context(WORKFLOW_TRACE_CONTEXTS.get(ctx.workflow_id))
        try:
            tool_call = payload.get("tool_call", {})
            function = tool_call.get("function", {}) if isinstance(tool_call, dict) else {}
            with start_span(
                "order_intake.tool.run",
                workflow_id=ctx.workflow_id,
                tool_name=function.get("name"),
                tool_call_id=tool_call.get("id") if isinstance(tool_call, dict) else None,
            ):
                return super().run_tool(ctx, payload)
        finally:
            reset_tool_trace_context(token)

    def finalize_workflow(self, ctx: Any, payload: dict[str, Any]) -> None:
        token = set_tool_trace_context(WORKFLOW_TRACE_CONTEXTS.get(ctx.workflow_id))
        try:
            with start_span(
                "order_intake.workflow.finalized",
                workflow_id=ctx.workflow_id,
                triggering_workflow_instance_id=payload.get("triggering_workflow_instance_id"),
            ):
                super().finalize_workflow(ctx, payload)
        finally:
            reset_tool_trace_context(token)
            WORKFLOW_TRACE_CONTEXTS.pop(ctx.workflow_id, None)

    def summarize(self, ctx: Any, payload: dict[str, Any]) -> dict[str, Any]:
        try:
            return super().summarize(ctx, payload)
        except Exception as exc:
            logger.warning("Skipping non-critical memory summary: %s", exc)
            return {}

    def _get_last_tool_content(self, instance_id: Any, tool_name: str) -> str | None:
        if not isinstance(instance_id, str) or not instance_id.strip():
            return None
        try:
            entry = self._infra.get_state(instance_id)
        except Exception as exc:
            logger.warning("Could not load OrderIntake state to recover tool output: %s", exc)
            return None
        return _get_last_tool_content(entry, tool_name)

def _normalize_from_tool_call_content(
    response: dict[str, Any],
    ctx: Any | None = None,
    fallback_task: Any | None = None,
) -> str | None:
    tool_call_content = _tool_call_content_from_response(response, "NormalizeShipmentRequest")
    if tool_call_content is None:
        return None
    parameters = _tool_call_parameters(tool_call_content, "NormalizeShipmentRequest")
    scenario = None
    if parameters is not None:
        scenario = (
            parameters.get("scenario")
            or parameters.get("task")
            or parameters.get("shipment_scenario")
            or parameters.get("request")
        )
    if (
        isinstance(scenario, str)
        and isinstance(fallback_task, str)
        and _looks_like_malformed_structured_scenario(scenario)
    ):
        scenario = fallback_task
    if not isinstance(scenario, str) or not scenario.strip():
        scenario = fallback_task
    if not isinstance(scenario, str) or not scenario.strip():
        return None
    return normalize_shipment_request(scenario.strip(), ctx=ctx)

def _looks_like_malformed_structured_scenario(scenario: str) -> bool:
    stripped = scenario.strip()
    if not stripped:
        return False
    if stripped[0] not in "{[":
        return False
    try:
        parsed = json.loads(stripped)
    except json.JSONDecodeError:
        return True
    return isinstance(parsed, (dict, list))

def _tool_call_parameters(content: Any, tool_name: str) -> dict[str, Any] | None:
    if not _looks_like_tool_call_content(content, tool_name):
        return None
    stripped = _strip_agent_markup(str(content))
    try:
        payload = json.loads(stripped)
    except json.JSONDecodeError:
        payload = _parse_function_style_tool_call(stripped, tool_name)
    if not isinstance(payload, dict) or payload.get("name") != tool_name:
        return None
    parameters = payload.get("parameters") or payload.get("arguments") or payload.get("args")
    function = payload.get("function")
    if isinstance(function, dict):
        parameters = function.get("arguments") or function.get("parameters") or parameters
        if function.get("name") not in {None, tool_name}:
            return None
    if isinstance(parameters, str) and parameters.strip():
        try:
            parsed_parameters = json.loads(_strip_agent_markup(parameters))
        except json.JSONDecodeError:
            return None
        parameters = parsed_parameters
    return parameters if isinstance(parameters, dict) else None

def _parse_function_style_tool_call(content: str, tool_name: str) -> dict[str, Any] | None:
    match = re.fullmatch(rf"{re.escape(tool_name)}\s*\((.*)\)", content, flags=re.DOTALL)
    if not match:
        return None
    raw_arguments = match.group(1).strip()
    if not raw_arguments:
        return {"name": tool_name, "parameters": {}}
    if raw_arguments.startswith("{"):
        try:
            parsed = json.loads(raw_arguments)
        except json.JSONDecodeError:
            return None
        return {"name": tool_name, "parameters": parsed} if isinstance(parsed, dict) else None
    keyword_match = re.fullmatch(r"scenario\s*=\s*(.+)", raw_arguments, flags=re.DOTALL)
    if keyword_match:
        try:
            scenario = json.loads(keyword_match.group(1).strip())
        except json.JSONDecodeError:
            scenario = keyword_match.group(1).strip().strip("\"'")
        return {"name": tool_name, "parameters": {"scenario": scenario}}
    try:
        scenario = json.loads(raw_arguments)
    except json.JSONDecodeError:
        scenario = raw_arguments.strip("\"'")
    return {"name": tool_name, "parameters": {"scenario": scenario}}

def _resolve_task_id(task_id: str | None = None, ctx: Any | None = None) -> str:
    workflow_id = getattr(ctx, "workflow_id", None)
    if isinstance(workflow_id, str) and workflow_id.strip():
        return workflow_id.strip()
    if isinstance(task_id, str) and task_id.strip():
        return task_id.strip()
    return "unknown"

def normalize_shipment_request(
    scenario: str,
    task_id: str = "unknown",
    ctx: Any | None = None,
    _source_agent: str | None = None,
) -> str:
    """Parse a shipment scenario into a canonical shipment request."""
    task_id = _resolve_task_id(task_id, ctx)
    with start_span("order_intake.tool.normalize_shipment_request", task_id=task_id):
        log_stage("tool input", tool="normalize_shipment_request", task_id=task_id, scenario=scenario)
        request = _build_shipment_request(scenario)
        result = json.dumps(request, indent=2)
        log_stage("tool output", tool="normalize_shipment_request", task_id=task_id, request=request)
        return result

def _build_shipment_request(scenario: str) -> dict[str, Any]:
    text = scenario.strip()
    lower = text.lower()
    origin, destination = _extract_lane(text)
    cargo_type = _classify_cargo(lower)
    weight_lbs = _extract_weight_lbs(lower)
    shipment_type = _classify_shipment_type(lower, weight_lbs)
    return {
        "raw_request": text,
        "origin": origin,
        "destination": destination,
        "cargo_type": cargo_type,
        "weight_lbs": weight_lbs,
        "volume": _extract_volume(lower),
        "service_level": "expedited" if any(word in lower for word in ("expedite", "expedited", "urgent", "same day")) else "standard",
        "shipment_type": shipment_type,
        "cross_border": any(word in lower for word in ("customs", "cross-border", "cross border", "canada", "mexico")),
        "delivery_window": _extract_delivery_window(text),
        "required_fields_valid": bool(origin and destination and cargo_type),
    }

def _extract_lane(text: str) -> tuple[str, str]:
    lane_match = re.search(
        r"\bfrom\s+(.+?)\s+to\s+(.+?)(?:\s+by\s+|\s+with\s+|\s+using\s+|\s+next\s+week\b|\s+for\s+|$)",
        text,
        flags=re.IGNORECASE,
    )
    if lane_match:
        return lane_match.group(1).strip(" ,."), lane_match.group(2).strip(" ,.")
    return "unspecified origin", "unspecified destination"

def _classify_cargo(lower: str) -> str:
    if "hazmat" in lower or "hazardous" in lower:
        return "hazmat"
    if "reefer" in lower or "refrigerated" in lower or "temperature" in lower:
        return "reefer"
    if "return" in lower:
        return "returns"
    if "parcel" in lower:
        return "parcel"
    return "general freight"

def _extract_weight_lbs(lower: str) -> int | None:
    match = re.search(r"(\d[\d,]*(?:\.\d+)?)\s*(lb|lbs|pound|pounds|kg|kgs|kilogram|kilograms)", lower)
    if not match:
        return None
    value = float(match.group(1).replace(",", ""))
    unit = match.group(2)
    # Normalize to whole pounds; kilograms are converted at 1 kg = 2.20462 lb.
    return round(value * 2.20462) if unit.startswith("kg") or unit.startswith("kilogram") else round(value)

def _extract_volume(lower: str) -> str:
    pallets = re.search(r"(\d+)\s+pallet", lower)
    if pallets:
        return f"{pallets.group(1)} pallets"
    # Try the plural spellings first so "10 boxes" is not truncated to "10 box".
    cartons = re.search(r"(\d+)\s+(cartons?|boxes|box)", lower)
    if cartons:
        return f"{cartons.group(1)} {cartons.group(2)}"
    return "unspecified"

def _classify_shipment_type(lower: str, weight_lbs: int | None) -> str:
    if "ftl" in lower or "full truckload" in lower or (weight_lbs is not None and weight_lbs >= 15000):
        return "FTL"
    if "parcel" in lower or (weight_lbs is not None and weight_lbs <= 150):
        return "parcel"
    return "LTL"

def _extract_delivery_window(text: str) -> str:
    by_match = re.search(
        r"\bby\s+(.+?)(?:\s+using\s+|\s+with\s+|\s+expedited\b|[,.]|$)",
        text,
        flags=re.IGNORECASE,
    )
    if by_match:
        return by_match.group(1).strip()
    next_week_match = re.search(r"\bnext\s+week\b", text, flags=re.IGNORECASE)
    if next_week_match:
        return "next week"
    return "not specified"

NORMALIZE_SHIPMENT_REQUEST_TOOL = AgentTool(
    name="NormalizeShipmentRequest",
    description=normalize_shipment_request.__doc__ or "",
    func=normalize_shipment_request,
    args_model=ShipmentScenarioToolArgs,
)

def create_order_intake_agent() -> DurableAgent:
    """Build the order intake durable agent with its normalization tool."""
    return TracedOrderIntakeAgent(
        name="order-intake-agent",
        role="Order Intake Specialist",
        goal="Normalize a shipment scenario into a canonical shipment request.",
        instructions=[
            "Use exactly one tool call per assistant turn until the normalized shipment request is complete.",
            "Follow this state machine strictly:",
            "1. If NormalizeShipmentRequest has not returned, call NormalizeShipmentRequest with the requested scenario.",
            "2. After NormalizeShipmentRequest returns, do not call another tool. Return the normalized shipment request exactly as the final answer.",
            "Do not skip steps, combine steps, reorder steps, or emit conversational commentary between tool calls.",
            "Do not include task_id in tool arguments.",
        ],
        tools=[
            NORMALIZE_SHIPMENT_REQUEST_TOOL,
        ],
        llm=DaprChatClient(component_name=CHAT_COMPONENT),
        runtime=create_workflow_runtime(),
        execution=AgentExecutionConfig(
            max_iterations=4,
            tool_choice="auto",
            tool_execution_mode=ToolExecutionMode.SEQUENTIAL,
        ),
    )

def main() -> None:
    runner = AgentRunner()
    order_intake = create_order_intake_agent()
    app = create_agent_app(
        runner=runner,
        agent=order_intake,
        title="Order Intake Agent Service",
    )
    run_agent_service(
        runner=runner,
        agent=order_intake,
        app=app,
        port=AGENT_PORT,
        uvicorn_log_config=UVICORN_LOG_CONFIG,
        log_stage=log_stage,
        chat_component=CHAT_COMPONENT,
    )

if __name__ == "__main__":
    try:
        main()
    except KeyboardInterrupt:
        pass

Carrier Capacity - Agent

The Carrier Capacity agent takes the normalized shipment request produced by the Order Intake agent and evaluates mode, service fit, capacity availability, temperature handling, and operational trade-offs. It then returns ranked carrier choices for route planning.
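To make the ranking step more concrete, here is a minimal, deterministic sketch in plain Python. It assumes a request dictionary with the same keys that `_build_shipment_request` produces (`shipment_type`, `cargo_type`, `service_level`, `cross_border`), treats mode, special cargo handling, border crossing, and available capacity as hard filters, and uses service-level fit as a score adjustment. The `Carrier` dataclass, the `score_carrier` and `rank_carriers` helpers, the sample carriers, and the weights are all hypothetical; this is an illustration of the idea, not the Carrier Capacity agent's actual implementation.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class Carrier:
    """Hypothetical carrier profile used only for this illustration."""

    name: str
    modes: frozenset[str]  # shipment types accepted, e.g. "LTL", "FTL", "parcel"
    handles_reefer: bool
    handles_hazmat: bool
    supports_expedited: bool
    cross_border: bool
    available_capacity: float  # hypothetical headroom: 0.0 (none) to 1.0 (plenty)


def score_carrier(request: dict[str, Any], carrier: Carrier) -> float | None:
    """Return a fit score for one carrier, or None if it cannot take the shipment."""
    # Hard constraints: mode, special cargo handling, border crossing, capacity.
    if request["shipment_type"] not in carrier.modes:
        return None
    if request["cargo_type"] == "reefer" and not carrier.handles_reefer:
        return None
    if request["cargo_type"] == "hazmat" and not carrier.handles_hazmat:
        return None
    if request["cross_border"] and not carrier.cross_border:
        return None
    if carrier.available_capacity <= 0:
        return None
    # Soft preference: start from capacity headroom, then adjust for service level.
    score = carrier.available_capacity
    if request["service_level"] == "expedited":
        score += 0.5 if carrier.supports_expedited else -0.5
    return score


def rank_carriers(request: dict[str, Any], carriers: list[Carrier]) -> list[tuple[str, float]]:
    """Return (name, score) pairs for eligible carriers, best first."""
    ranked = []
    for carrier in carriers:
        score = score_carrier(request, carrier)
        if score is not None:
            ranked.append((carrier.name, round(score, 2)))
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    request = {
        "shipment_type": "LTL",
        "cargo_type": "reefer",
        "service_level": "expedited",
        "cross_border": False,
    }
    carriers = [
        Carrier(name="ColdChain Express", modes=frozenset({"LTL", "FTL"}), handles_reefer=True,
                handles_hazmat=False, supports_expedited=True, cross_border=False, available_capacity=0.6),
        Carrier(name="Budget Freight", modes=frozenset({"LTL"}), handles_reefer=False,
                handles_hazmat=False, supports_expedited=False, cross_border=True, available_capacity=0.9),
        Carrier(name="Northern Reefer", modes=frozenset({"LTL"}), handles_reefer=True,
                handles_hazmat=False, supports_expedited=False, cross_border=True, available_capacity=0.8),
    ]
    # Budget Freight is filtered out (no reefer support); ColdChain Express ranks first.
    for name, score in rank_carriers(request, carriers):
        print(f"{name}: {score}")
```

Filtering before scoring keeps ineligible carriers (for example, a dry-van carrier offered a reefer load) out of the ranking entirely, so a large capacity number can never mask a hard mismatch.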

# pyproject.toml
[project]
name = "agent-carrier-capacity"
version = "0.1.0"
requires-python = ">=3.11,<3.14"
dependencies = [
    "dapr-agents==1.0.0",
    "dapr==1.17.3",
    "opentelemetry-api==1.41.1",
    "opentelemetry-sdk==1.41.1",
    "opentelemetry-exporter-otlp-proto-grpc==1.41.1",
    "python-dotenv==1.0.0",
    "uvicorn[standard]==0.31.1",
]

# app.py

from __future__ import annotations

import json
import sys
from pathlib import Path
from typing import Any

sys.path.append(str(Path(__file__).resolve().parents[1]))

from pydantic import BaseModel, Field

from dapr_agents import DurableAgent
from dapr_agents.agents.configs import AgentExecutionConfig, ToolExecutionMode
from dapr_agents.llm.dapr import DaprChatClient
from dapr_agents.tool import AgentTool
from dapr_agents.workflow.runners import AgentRunner

from shared.observability import (
    reset_tool_trace_context,
    set_tool_trace_context,
    start_span,
)
from shared.agent_config import configure_agent_environment
from shared.agent_host import (
    create_agent_app,
    run_agent_service,
)
from shared.json_payloads import (
    loads_object as _loads_object,
)
from shared.logging_utils import create_stage_logger
from shared.tool_recovery import (
    get_last_tool_content as _get_last_tool_content,
    replace_response_with_tool_output,
    tool_call_content_from_response as _tool_call_content_from_response,
)
from shared.workflow_runtime import create_workflow_runtime

runtime_config = configure_agent_environment(
    service_name="carrier-capacity",
    logger_name="carrier_capacity.app",
    default_port=50052,
)

logger = runtime_config.logger
UVICORN_LOG_CONFIG = runtime_config.uvicorn_log_config
AGENT_PORT = runtime_config.agent_port
CHAT_COMPONENT = runtime_config.chat_component